More website traffic should mean more success, but in practice, it often doesn’t. Many websites see rising visit counts while conversions, engagement, and revenue stay flat, leaving teams wondering why “growth” doesn’t feel like growth at all.

One reason is that not all traffic represents real people. Automated activity now makes up a large share of the modern web. In fact, the 2025 Imperva Bad Bot Report found that automated systems accounted for 51% of all web traffic in 2024, meaning bots collectively generated more requests than human visitors for the first time in a decade.

When automated traffic mixes into analytics reports, raw visit counts alone become an unreliable measure of real audience interest or demand.

This article explains how to distinguish between genuine website visitors, helpful automation, and harmful bot activity.

Contents

- 1 What bot traffic actually is

- 2 The three types of traffic hitting your site

- 3 Real visitors: What human traffic looks like

- 4 Helpful bots: Automation that supports your site

- 5 Harmful bots: Traffic that creates risk or waste

- 6 How to tell humans, helpful bots, and harmful bots apart

- 7 Where to analyze bot traffic

- 8 How bot traffic distorts analytics and decision-making

- 9 Best practices for managing different types of traffic

- 10 Use traffic source information to make better decisions

What bot traffic actually is

Bot traffic refers to requests made by automated software rather than by a human using a browser. These programs send requests to web pages, images, scripts, or APIs in the same way a visitor’s browser would, but the activity happens without direct human interaction.

From a technical perspective, the server often sees the same kind of request. The difference lies in how the request is generated and how it behaves over time.
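Because a single bot request can look identical to a browser request, classification usually starts from the access log, where behavior over time becomes visible. The sketch below assumes your web server writes the common Apache/nginx “combined” log format (other formats need a different pattern) and parses one line into the fields referred to throughout this article: IP address, timestamp, method, path, status code, referrer, and user agent. The sample line is made up.

```python
import re
from datetime import datetime
from typing import NamedTuple, Optional

# Apache/nginx "combined" log format (assumed; adjust if your server logs differently).
COMBINED = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) \S+" (?P<status>\d{3}) \S+ '
    r'"(?P<referrer>[^"]*)" "(?P<agent>[^"]*)"'
)


class Request(NamedTuple):
    ip: str
    time: datetime
    method: str
    path: str
    status: int
    referrer: str
    agent: str


def parse_line(line: str) -> Optional[Request]:
    """Parse one combined-format log line; return None if the line doesn't match."""
    m = COMBINED.match(line)
    if m is None:
        return None
    return Request(
        ip=m["ip"],
        time=datetime.strptime(m["time"], "%d/%b/%Y:%H:%M:%S %z"),
        method=m["method"],
        path=m["path"],
        status=int(m["status"]),
        referrer=m["referrer"],
        agent=m["agent"],
    )


sample = (
    '203.0.113.7 - - [10/Mar/2025:14:02:11 +0000] "GET /pricing/ HTTP/1.1" 200 5123 '
    '"https://example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"'
)
req = parse_line(sample)
print(req.ip, req.status, req.path)  # 203.0.113.7 200 /pricing/
```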

Automation isn’t unusual or inherently harmful. Much of the internet depends on automated systems that regularly crawl websites, check uptime, validate performance, or retrieve data for legitimate services. Search engines rely on bots to discover and index new content, monitoring tools regularly check availability, and various integrations query APIs to keep applications synchronized.

Importantly, the word “bot” describes how the traffic is generated, not why it exists. Some automated systems support visibility and security, while others attempt to exploit vulnerabilities, scrape content, or overwhelm infrastructure. Because intent varies widely, identifying and classifying bot behavior is far more useful than treating all automated traffic as a single category.

The three types of traffic hitting your site

Website traffic is often discussed as a simple split between “human” and “bot,” but in reality, most requests fall into three practical categories: real visitors, helpful bots, and harmful bots. Understanding this distinction makes it easier to interpret analytics, manage resources, and apply the right security controls without disrupting legitimate activity.

As mentioned earlier, the Imperva Bad Bot Report found that automated traffic accounted for more than half of all web requests globally, with a substantial portion classified as either beneficial automation or malicious bot activity. When these different sources are mixed, traffic volume alone provides little insight into real user demand or engagement.

The goal isn’t to block anything that appears automated, but to identify which requests come from real people, which support site functionality and visibility, and which create risk or unnecessary load.

Analyzing behavior patterns, request characteristics, and traffic sources provides the clarity needed to allow beneficial automation, protect against harmful activity, and evaluate performance using data that reflects genuine user behavior.

Real visitors: What human traffic looks like

Human traffic tends to follow irregular, unpredictable patterns. Real visitors move through sites in different ways: they click different navigation paths, pause on certain pages, scroll to different depths, and spend inconsistent amounts of time before taking the next action. Even when multiple visitors arrive from the same campaign or region, their behavior rarely follows identical sequences.

Authentic user sessions also include realistic interaction patterns. Actions like on-site searches, form submissions, media playback, account logins, or e-commerce activity usually occur in logical progressions rather than at perfectly timed or repeated intervals. The timing between requests varies naturally, reflecting how people read, think, and decide what to do next.
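One way to test the “rarely identical sequences” observation against your own data is to group requests into per-client path sequences and count how many clients share exactly the same one. This is a minimal sketch that assumes requests are already grouped by a client key (the session IDs and paths below are hypothetical). Large clusters of identical multi-page sequences deserve a closer look, although a popular landing page can legitimately produce short repeats, which is why very short sequences are skipped.

```python
from collections import Counter


def identical_sequence_clusters(
    sessions: dict[str, list[str]], min_pages: int = 3, min_clients: int = 2
) -> list[tuple[tuple[str, ...], int]]:
    """Return path sequences of at least min_pages pages shared by min_clients or more sessions."""
    counts = Counter(tuple(paths) for paths in sessions.values() if len(paths) >= min_pages)
    return [(seq, n) for seq, n in counts.most_common() if n >= min_clients]


# Hypothetical sessions keyed by a client identifier.
sessions = {
    "a": ["/", "/blog/", "/pricing/"],
    "b": ["/", "/docs/", "/docs/install/", "/pricing/"],
    "c": ["/wp-login.php", "/xmlrpc.php", "/?p=1"],
    "d": ["/wp-login.php", "/xmlrpc.php", "/?p=1"],
    "e": ["/wp-login.php", "/xmlrpc.php", "/?p=1"],
    "f": ["/pricing/"],
}

for seq, n in identical_sequence_clusters(sessions):
    print(n, " -> ".join(seq))
# 3 /wp-login.php -> /xmlrpc.php -> /?p=1
```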

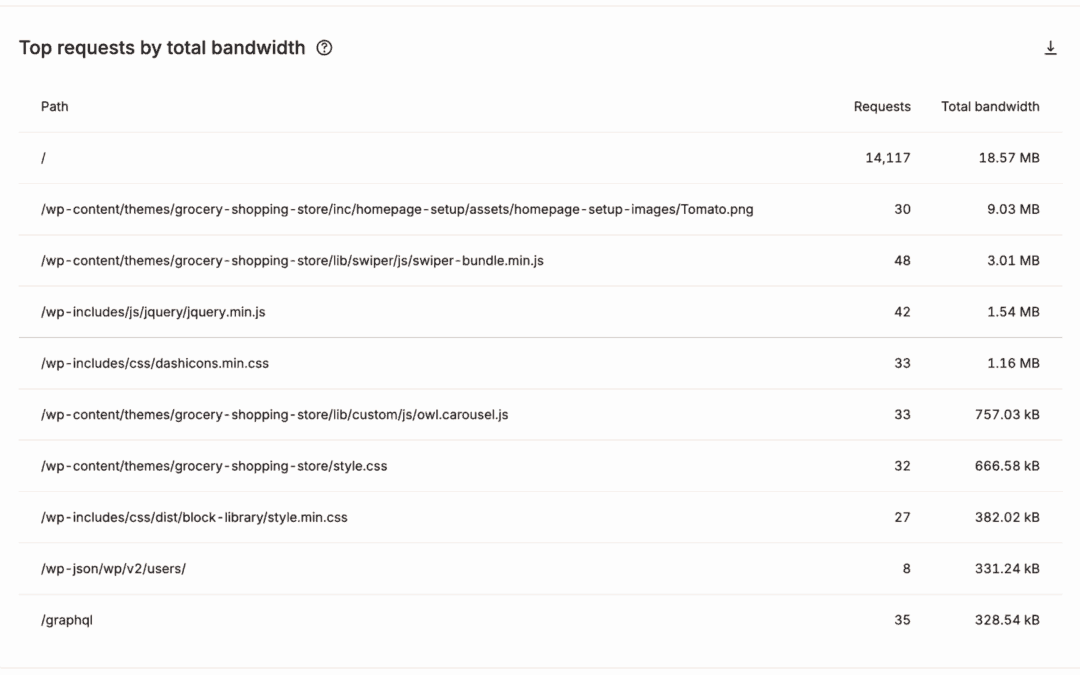

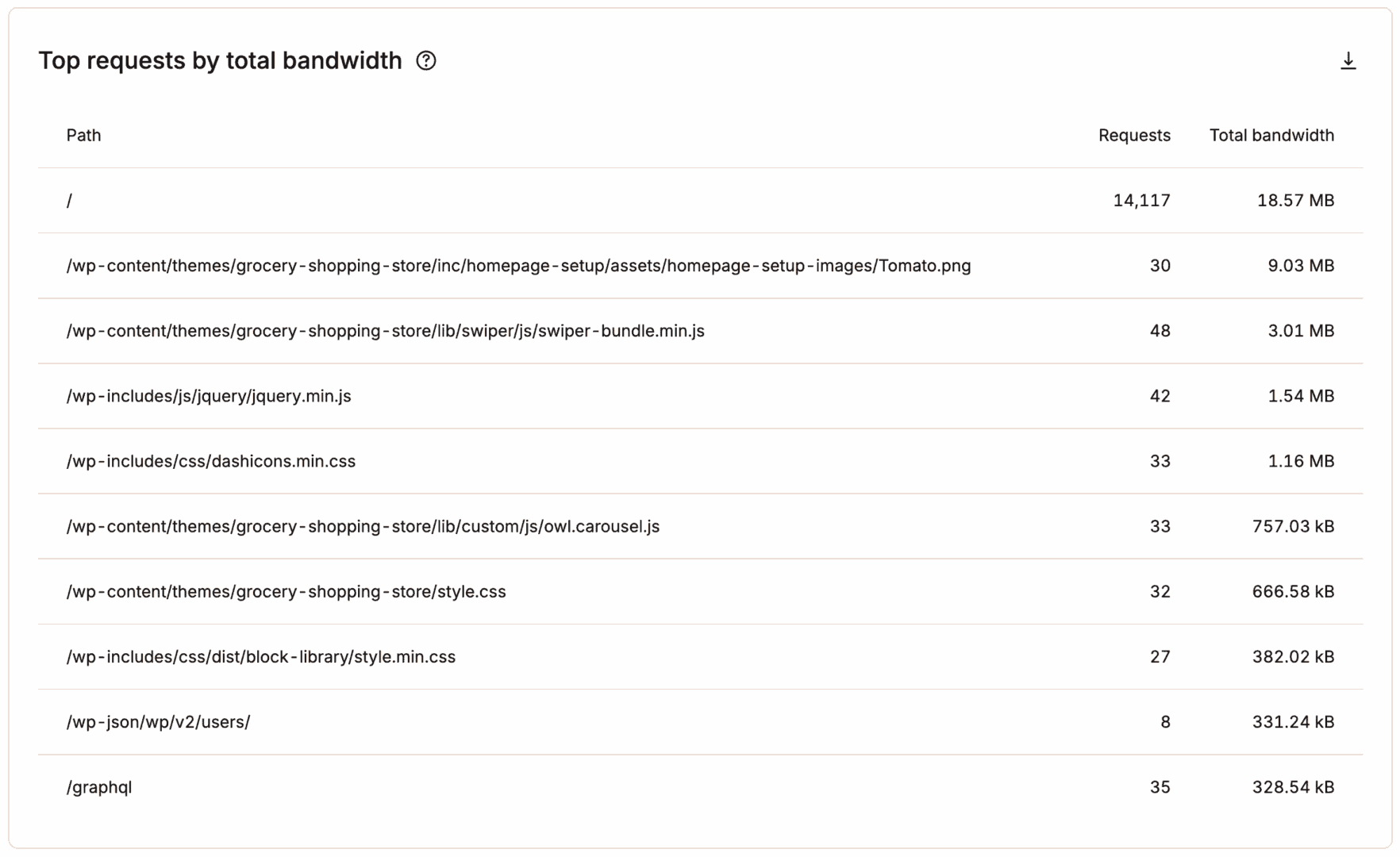

With MyKinsta, you can see at a glance which pages are getting the most traffic:

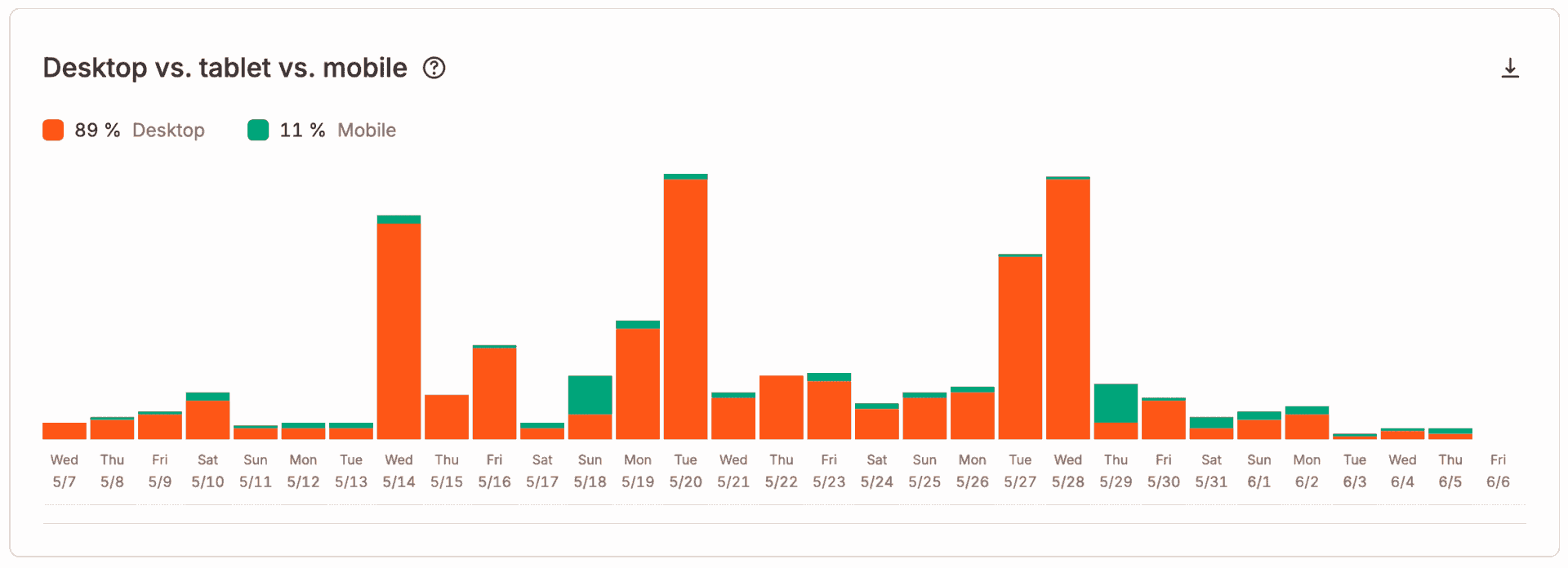

Device diversity is another strong indicator of human traffic. Real visitors arrive using a wide mix of browsers, operating systems, connection speeds, and screen sizes. Even geographically concentrated traffic shows variation across devices and configurations, creating a distribution that rarely looks uniform.

MyKinsta also provides data on device usage.
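Device diversity can be reduced to two simple numbers for a given traffic segment: how many distinct user agents appear, and what share of requests the single most common one accounts for. This is a sketch using simplified, hypothetical browser/OS labels rather than full user agent strings; in practice you would likely bucket raw strings into families first, since version numbers alone create many near-duplicates.

```python
from collections import Counter


def agent_concentration(agents: list[str]) -> tuple[int, float]:
    """Return (number of distinct user agents, share of requests from the most common one)."""
    if not agents:
        return 0, 0.0
    counts = Counter(agents)
    top_count = counts.most_common(1)[0][1]
    return len(counts), top_count / len(agents)


# Hypothetical, simplified agent labels.
human_like = ["Chrome/Win", "Safari/iOS", "Chrome/Android", "Firefox/Linux", "Safari/macOS", "Chrome/Win"]
bot_like = ["python-requests/2.31"] * 9 + ["Chrome/Win"]

print(agent_concentration(human_like))  # (5, 0.3333333333333333)
print(agent_concentration(bot_like))    # (2, 0.9)
```

A segment where one agent accounts for nearly all requests is not proof of automation on its own, but it is the kind of uniformity real audiences rarely produce.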

At the same time, identifying human traffic isn’t always straightforward. Privacy protections, ad blockers, caching layers, and shared network environments can obscure certain signals or make different users appear identical at the infrastructure level.

For this reason, traffic classification works best when multiple indicators, including the behavior patterns, session characteristics, device diversity, and interaction signals discussed above, are evaluated together rather than relying on any single metric alone.

Helpful bots: Automation that supports your site

Not all automated traffic is something you want to stop. Many bots play an essential role in keeping your website visible, monitored, and functioning correctly.

Search engine crawlers

Search engine crawlers are among the most important examples. These bots systematically request pages to discover new content, evaluate changes, and update search indexes.

Their behavior is usually structured and predictable: they follow links methodically and respect crawl directives defined in robots.txt. Blocking these crawlers from accessing your site can reduce search visibility and delay how quickly new pages appear in results.
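Since any client can claim to be a search crawler, Google and Bing document a verification method: run a reverse DNS lookup on the requesting IP, check that the hostname belongs to the search engine’s domain, then run a forward lookup to confirm the hostname resolves back to the same IP. The sketch below follows that approach with Python’s standard `socket` module. The domain suffixes are assumptions to check against each search engine’s current documentation, and `gethostbyname_ex` only handles IPv4 (an IPv6-capable version would use `socket.getaddrinfo`).

```python
import socket

# Assumed hostname suffixes for verified crawlers; confirm against current vendor docs.
CRAWLER_DOMAINS = {
    "Googlebot": (".googlebot.com", ".google.com"),
    "bingbot": (".search.msn.com",),
}


def hostname_matches(hostname: str, suffixes: tuple[str, ...]) -> bool:
    """True if the hostname ends with one of the allowed domain suffixes."""
    host = hostname.rstrip(".").lower()
    return any(host.endswith(suffix) for suffix in suffixes)


def verify_crawler(ip: str, claimed: str) -> bool:
    """Reverse-resolve the IP, check its domain, then forward-resolve to confirm the IP (IPv4 only)."""
    suffixes = CRAWLER_DOMAINS.get(claimed)
    if suffixes is None:
        return False  # no verification rule for this crawler name
    try:
        hostname, _, _ = socket.gethostbyaddr(ip)            # reverse (PTR) lookup
        if not hostname_matches(hostname, suffixes):
            return False
        _, _, addresses = socket.gethostbyname_ex(hostname)  # forward (A) lookup
    except OSError:  # covers socket.herror and socket.gaierror
        return False
    return ip in addresses
```

Calling `verify_crawler(ip, "Googlebot")` needs network access, so results are best cached per IP rather than looked up on every request.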

Uptime monitors and testing services

Other legitimate automation focuses on monitoring and operational health. Uptime monitoring tools, performance checkers, and synthetic testing services send requests at regular intervals to confirm availability, measure load times, and detect failures early.

SEO and validation tools

Similarly, SEO, accessibility, and validation tools scan pages to identify technical issues, broken links, or compliance problems that might otherwise go unnoticed.

Helpful bots generally make their presence clear. They often identify themselves through consistent user agent strings, operate within defined request limits, and follow published crawl policies.
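Because helpful bots usually announce themselves, a first-pass label can come from matching known tokens in the user agent. This is a sketch with a small, illustrative token list, not a maintained registry. Treat a match as a claim only: harmful bots can copy these strings, so pair this with a check such as the DNS verification search engines document before allowlisting anything.

```python
from typing import Optional

# Illustrative tokens only; a real allowlist would be longer and curated.
KNOWN_BOT_TOKENS = {
    "googlebot": "search crawler",
    "bingbot": "search crawler",
    "uptimerobot": "uptime monitor",
    "lighthouse": "performance tester",
}


def claimed_bot_type(user_agent: str) -> Optional[str]:
    """Return the category a user agent claims to belong to, or None if no known token appears."""
    ua = user_agent.lower()
    for token, category in KNOWN_BOT_TOKENS.items():
        if token in ua:
            return category
    return None


print(claimed_bot_type("Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"))
# search crawler
print(claimed_bot_type("Mozilla/5.0 (Macintosh; Intel Mac OS X 14_0) Safari/605.1.15"))
# None
```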

Because these systems support indexing, observability, and integrations, blocking them without review can interrupt monitoring workflows, reduce discoverability, or break services that depend on scheduled automated requests.

Harmful bots: Traffic that creates risk or waste

Harmful bots are automated systems designed to exploit websites, extract data at scale, or consume infrastructure resources without providing any legitimate value. Unlike helpful automation, these bots typically try to hide their identity, ignore crawl rules, and generate request patterns intended to evade basic protections.

Credential-stuffing and brute-force bots

These bots are among the most common threats. They repeatedly target login endpoints, cycling through large lists of stolen usernames and passwords (credential stuffing) or guessed combinations (brute force) in rapid succession in an attempt to gain unauthorized access. Even when unsuccessful, the volume of requests can increase server load and slow response times for legitimate users.
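A common way to spot this in logs is to count login attempts per source within a sliding time window. This sketch assumes a WordPress-style login endpoint (`/wp-login.php`) and counts every POST to it, since a failed WordPress login often still returns HTTP 200. The window and threshold are placeholder values to tune, and the events are made up.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta

LOGIN_PATH = "/wp-login.php"   # assumption: a WordPress-style login endpoint
WINDOW = timedelta(minutes=5)  # placeholder window
MAX_ATTEMPTS = 10              # placeholder threshold


def flag_login_abuse(events: list[tuple[datetime, str, str, str]]) -> set[str]:
    """Return IPs that sent more than MAX_ATTEMPTS login POSTs within any WINDOW.

    Each event is (timestamp, ip, method, path), assumed sorted by timestamp.
    """
    recent: dict[str, deque] = defaultdict(deque)
    flagged: set[str] = set()
    for ts, ip, method, path in events:
        if method != "POST" or path != LOGIN_PATH:
            continue
        attempts = recent[ip]
        attempts.append(ts)
        while ts - attempts[0] > WINDOW:  # drop attempts that fell out of the window
            attempts.popleft()
        if len(attempts) > MAX_ATTEMPTS:
            flagged.add(ip)
    return flagged


start = datetime(2025, 3, 10, 14, 0, 0)
events = [(start + timedelta(seconds=2 * i), "198.51.100.9", "POST", LOGIN_PATH) for i in range(30)]
events.append((start + timedelta(minutes=1), "203.0.113.7", "POST", LOGIN_PATH))
events.sort()
print(flag_login_abuse(events))  # {'198.51.100.9'}
```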

Vulnerability scanners and scrapers

Other malicious automation focuses on discovery and exploitation. Vulnerability scanners probe known directories, configuration files, and software endpoints in search of outdated components or misconfigurations that could be exploited. Aggressive scraping bots may also request large volumes of pages or media files to copy content for republishing elsewhere, consuming bandwidth and infrastructure capacity in the process.
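Scanner traffic often shows up as requests for sensitive files that a normal visitor would never ask for. This is a sketch with a short, illustrative list of probe patterns (real scanners try many more paths); it counts probe requests per IP and keeps sources that hit several of them. A one-off hit can be noise, but a probe path that returns 200 rather than 404 deserves immediate attention regardless of count.

```python
import re

# Illustrative probe patterns only; extend with paths relevant to your stack.
PROBE_PATTERNS = [
    re.compile(r"/\.env$"),
    re.compile(r"/\.git/"),
    re.compile(r"wp-config\.php\.(bak|old|save|txt)$"),
    re.compile(r"/phpmyadmin/?", re.IGNORECASE),
]


def is_probe(path: str) -> bool:
    """True if the path matches a known scanner probe pattern."""
    return any(pattern.search(path) for pattern in PROBE_PATTERNS)


def probe_sources(requests: list[tuple[str, str]], min_hits: int = 3) -> dict[str, int]:
    """Count probe requests per IP from (ip, path) pairs; keep sources with at least min_hits."""
    hits: dict[str, int] = {}
    for ip, path in requests:
        if is_probe(path):
            hits[ip] = hits.get(ip, 0) + 1
    return {ip: n for ip, n in hits.items() if n >= min_hits}


requests = [
    ("192.0.2.44", "/.env"),
    ("192.0.2.44", "/.git/config"),
    ("192.0.2.44", "/wp-config.php.bak"),
    ("203.0.113.7", "/blog/"),
    ("203.0.113.7", "/.env"),
]
print(probe_sources(requests))  # {'192.0.2.44': 3}
```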

DDoS attacks

Some attacks aim purely at disruption rather than access. Traffic-flooding and denial-of-service campaigns try to overwhelm servers or application layers with sustained request spikes, degrading performance or making services temporarily unavailable.

Beyond its immediate performance impact, harmful bot traffic can distort analytics and degrade the experience for real visitors if left unmanaged.

How to tell humans, helpful bots, and harmful bots apart

Distinguishing between real visitors, helpful automation, and harmful bots depends less on any single identifier and more on recognizing consistent behavior patterns across multiple signals.

When evaluated together, these indicators help you determine whether traffic reflects human activity, legitimate automation, or potentially abusive requests.

Request frequency and timing

Human visitors generate requests at irregular intervals as they read, scroll, and navigate, while automated systems tend to request pages at highly consistent rates or in rapid bursts that would be difficult for a person to replicate. Extremely high request rates from a single source or perfectly timed intervals usually indicate scripted activity.
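Timing regularity can be quantified with the coefficient of variation (standard deviation divided by mean) of the gaps between one client’s requests: metronome-like scripts score near 0, while people reading and clicking usually score much higher. This is a sketch only. Where to draw the line is a judgment call, and because a single page view triggers a burst of asset requests, it works best on page requests rather than every file. The timestamps below are made up.

```python
import statistics
from typing import Optional


def interval_cv(timestamps: list[float]) -> Optional[float]:
    """Coefficient of variation (stdev / mean) of the gaps between consecutive requests.

    Low values mean near-constant timing; None if there are too few requests to judge.
    """
    ts = sorted(timestamps)
    gaps = [b - a for a, b in zip(ts, ts[1:])]
    if len(gaps) < 2 or statistics.mean(gaps) == 0:
        return None
    return statistics.stdev(gaps) / statistics.mean(gaps)


scripted = [i * 2.0 for i in range(20)]                    # one request every 2 seconds
human = [0.0, 3.1, 19.8, 24.0, 61.5, 63.2, 140.7, 152.9]  # bursts and pauses (seconds)
print(interval_cv(scripted))  # 0.0
print(interval_cv(human))
```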

User agent strings

Legitimate bots usually identify themselves clearly and consistently, while harmful bots frequently rotate or spoof user agents in an attempt to appear human. Comparing user agent declarations with observed behavior helps reveal inconsistencies that point to automation.
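One concrete mismatch to look for: a client whose user agent claims to be a browser but which requests many HTML pages and never any CSS, JavaScript, images, or fonts. This sketch uses a hypothetical extension list and threshold. It is a weak signal on its own, because assets served from a CDN or a browser cache may never reach your origin logs, so it is best combined with the other indicators.

```python
from collections import defaultdict

# Static asset extensions a real browser usually requests alongside HTML pages.
ASSET_SUFFIXES = (".css", ".js", ".png", ".jpg", ".jpeg", ".webp", ".svg", ".woff2")


def browser_ua_without_assets(requests: list[tuple[str, str, str]], min_pages: int = 5) -> set[str]:
    """Return IPs whose browser-style user agent fetched at least min_pages pages but no assets.

    Each request is (ip, user_agent, path); min_pages is a placeholder threshold.
    """
    pages = defaultdict(int)
    assets = defaultdict(int)
    for ip, ua, path in requests:
        key = (ip, ua)
        if path.split("?", 1)[0].lower().endswith(ASSET_SUFFIXES):
            assets[key] += 1
        else:
            pages[key] += 1
    return {
        ip
        for (ip, ua), n in pages.items()
        if ua.startswith("Mozilla/") and n >= min_pages and assets[(ip, ua)] == 0
    }


scraper = [("198.51.100.20", "Mozilla/5.0 (Windows NT 10.0; Win64; x64)", f"/product/{i}/") for i in range(8)]
visitor = [
    ("203.0.113.7", "Mozilla/5.0 (iPhone)", p)
    for p in ("/", "/style.css", "/logo.svg", "/blog/", "/blog/post/", "/app.js", "/pricing/", "/contact/")
]
print(browser_ua_without_assets(scraper + visitor))  # {'198.51.100.20'}
```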

IP reputation and network ownership

Traffic originating from known cloud hosting networks, proxy services, or previously flagged addresses may come from automated systems rather than real people. Reputation databases and security tools classify these networks based on past activity and help identify suspicious sources more quickly.
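Checking whether an address falls inside a list of networks is straightforward with Python’s standard `ipaddress` module. In this sketch the RFC 5737 documentation ranges stand in for a real list, which you would load from a cloud provider’s published ranges or a reputation feed. A match only means the request came from such a network; VPN users and corporate proxies can land there too.

```python
import ipaddress

# Placeholder networks (RFC 5737 documentation ranges) standing in for real cloud/proxy ranges.
FLAGGED_NETWORKS = [
    ipaddress.ip_network("198.51.100.0/24"),
    ipaddress.ip_network("203.0.113.0/24"),
]


def in_flagged_network(ip: str) -> bool:
    """True if the address falls inside any flagged network; raises ValueError for invalid input."""
    addr = ipaddress.ip_address(ip)
    # Membership tests between IPv4 and IPv6 objects simply return False.
    return any(addr in net for net in FLAGGED_NETWORKS)


print(in_flagged_network("203.0.113.57"))  # True
print(in_flagged_network("192.0.2.10"))    # False
```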

Geographic distribution patterns

Sudden increases in traffic from unexpected regions, especially when combined with identical request behavior, may suggest coordinated bot activity rather than genuine audience growth.
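To check for this pattern, compare each region’s share of current traffic with its share during a baseline period. This sketch assumes every request has already been mapped to a country or region code (for example with a GeoIP database, not shown); the 3x ratio and 5% minimum share are placeholder thresholds. A flagged region is a prompt to inspect whether those requests also look alike, not a verdict.

```python
from collections import Counter


def region_surges(
    baseline: list[str], current: list[str], min_ratio: float = 3.0, min_share: float = 0.05
) -> dict[str, float]:
    """Return regions whose share of requests grew by at least min_ratio versus the baseline.

    Regions below min_share of current traffic are ignored to reduce noise.
    """
    base, cur = Counter(baseline), Counter(current)
    base_total, cur_total = sum(base.values()), sum(cur.values())
    surges = {}
    for region, n in cur.items():
        share = n / cur_total
        if share < min_share:
            continue
        base_share = base[region] / base_total if base_total else 0.0
        # A region absent from the baseline counts as an unbounded increase.
        ratio = share / base_share if base_share else float("inf")
        if ratio >= min_ratio:
            surges[region] = ratio
    return surges


baseline = ["US"] * 60 + ["GB"] * 25 + ["DE"] * 15
current = ["US"] * 55 + ["GB"] * 20 + ["DE"] * 15 + ["SG"] * 60
print(region_surges(baseline, current))  # {'SG': inf}
```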

Respect for robots.txt and crawl limits

Compliance with these rules is a strong indicator of legitimate automation. Helpful bots usually follow published crawl policies and operate within reasonable request limits, whereas harmful bots typically ignore these directives and keep requesting restricted paths or files.
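Python’s standard `urllib.robotparser` module can check which logged requests hit paths your robots.txt disallows for the requesting user agent. The robots.txt content and the `ExampleBot/1.0` agent below are hypothetical. Keep in mind that robots.txt is advisory: a violation tells you the client ignores it, not that the request was blocked.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /private/
"""


def robots_violations(requests: list[tuple[str, str]], robots_txt: str) -> list[tuple[str, str]]:
    """Return the (user_agent, path) requests that robots.txt disallows for that agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [(ua, path) for ua, path in requests if not parser.can_fetch(ua, path)]


requests = [
    ("ExampleBot/1.0", "/blog/"),
    ("ExampleBot/1.0", "/private/report.pdf"),
    ("ExampleBot/1.0", "/wp-admin/options.php"),
]
print(robots_violations(requests, ROBOTS_TXT))
# [('ExampleBot/1.0', '/private/report.pdf'), ('ExampleBot/1.0', '/wp-admin/options.php')]
```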

Because no single signal provides a complete answer, effective classification comes from analyzing several indicators together. Over time, these combined patterns build a reliable picture of whether incoming traffic represents real users, beneficial automation, or activity that requires filtering or mitigation.
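One way to combine indicators is a weighted score per client, with verified crawlers handled first. This is a minimal sketch: the signal names, weights, and threshold are hypothetical and would need tuning against traffic you have already labeled by hand. Computing each boolean is left to your own log pipeline.

```python
from dataclasses import dataclass


@dataclass
class ClientSignals:
    """Per-client observations; computing each one is left to your own log pipeline."""
    regular_timing: bool = False        # near-constant gaps between requests
    datacenter_ip: bool = False         # address inside a known hosting or proxy range
    ignores_robots: bool = False        # requested paths that robots.txt disallows
    ua_behavior_mismatch: bool = False  # e.g. a browser user agent that never loads assets
    verified_crawler: bool = False      # passed reverse and forward DNS verification


# Hypothetical weights; tune them against hand-labeled traffic.
WEIGHTS = {"regular_timing": 2, "datacenter_ip": 1, "ignores_robots": 3, "ua_behavior_mismatch": 2}


def classify(s: ClientSignals, harmful_at: int = 4) -> str:
    """Combine signals into one label instead of trusting any single indicator."""
    if s.verified_crawler and not s.ignores_robots:
        return "helpful bot"
    score = sum(weight for name, weight in WEIGHTS.items() if getattr(s, name))
    if score >= harmful_at:
        return "likely harmful"
    return "needs review" if score > 0 else "likely human"


print(classify(ClientSignals(regular_timing=True, datacenter_ip=True, ignores_robots=True)))   # likely harmful
print(classify(ClientSignals(regular_timing=True, datacenter_ip=True, verified_crawler=True)))  # helpful bot
print(classify(ClientSignals(regular_timing=True, datacenter_ip=True)))                         # needs review
print(classify(ClientSignals()))                                                                # likely human
```

An uptime monitor that isn’t on an allowlist lands in “needs review” here, which is arguably the right outcome: a person decides instead of the script blocking it.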

Where to analyze bot traffic

Identifying bot activity requires visibility across several layers of your hosting and delivery stack. No single tool shows the whole picture, which is why combining analytics, logs, and security dashboards produces far more reliable insights. Let’s look at each:

Analytics platforms provide a high-level starting point

Traffic spikes without matching engagement, sudden geographic anomalies, or unusual device distributions often signal automated activity. While analytics tools don’t always classify bots precisely, they help surface patterns that warrant deeper investigation. Even simple plugins like Jetpack can help with this.

Server and access logs offer the most detailed view of request behavior

Logs reveal request frequency, response codes, user agent strings, IP addresses, and accessed paths. These details help you identify repeated scanning patterns, login attack attempts, or scraping behavior that would otherwise stay hidden in aggregated analytics data.
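Once log lines are parsed into fields, a quick per-IP summary often surfaces scanners and scrapers: a single address with many requests and a high share of error responses (typically 404s from probing paths that don’t exist) stands out. This sketch assumes you already have `(ip, status)` pairs, for example from a log parser; the sample data is made up.

```python
from collections import Counter


def summarize_by_ip(entries: list[tuple[str, int]], top: int = 3) -> list[tuple[str, int, float]]:
    """Return (ip, request count, share of 4xx/5xx responses) for the busiest IPs."""
    totals = Counter(ip for ip, _ in entries)
    errors = Counter(ip for ip, status in entries if status >= 400)
    return [(ip, n, errors[ip] / n) for ip, n in totals.most_common(top)]


entries = (
    [("192.0.2.44", 404)] * 40 + [("192.0.2.44", 200)] * 10
    + [("203.0.113.7", 200)] * 12
    + [("198.51.100.3", 301)] * 2 + [("198.51.100.3", 200)] * 3
)
for row in summarize_by_ip(entries):
    print(row)
# ('192.0.2.44', 50, 0.8)
# ('203.0.113.7', 12, 0.0)
# ('198.51.100.3', 5, 0.0)
```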

CDN dashboards add another layer of visibility

CDN dashboards show traffic patterns at the network edge, before requests reach your origin server. These dashboards often highlight traffic surges, regional anomalies, or repeated automated requests that can be filtered or rate-limited upstream, which helps you detect attacks much earlier than you otherwise could.

Firewalls and WAF tools provide real-time insight

Firewalls show you blocked, challenged, or suspicious requests in real time. Reviewing firewall logs can reveal which traffic sources are triggering security rules and whether adjustments are needed to reduce false positives or tighten protections.

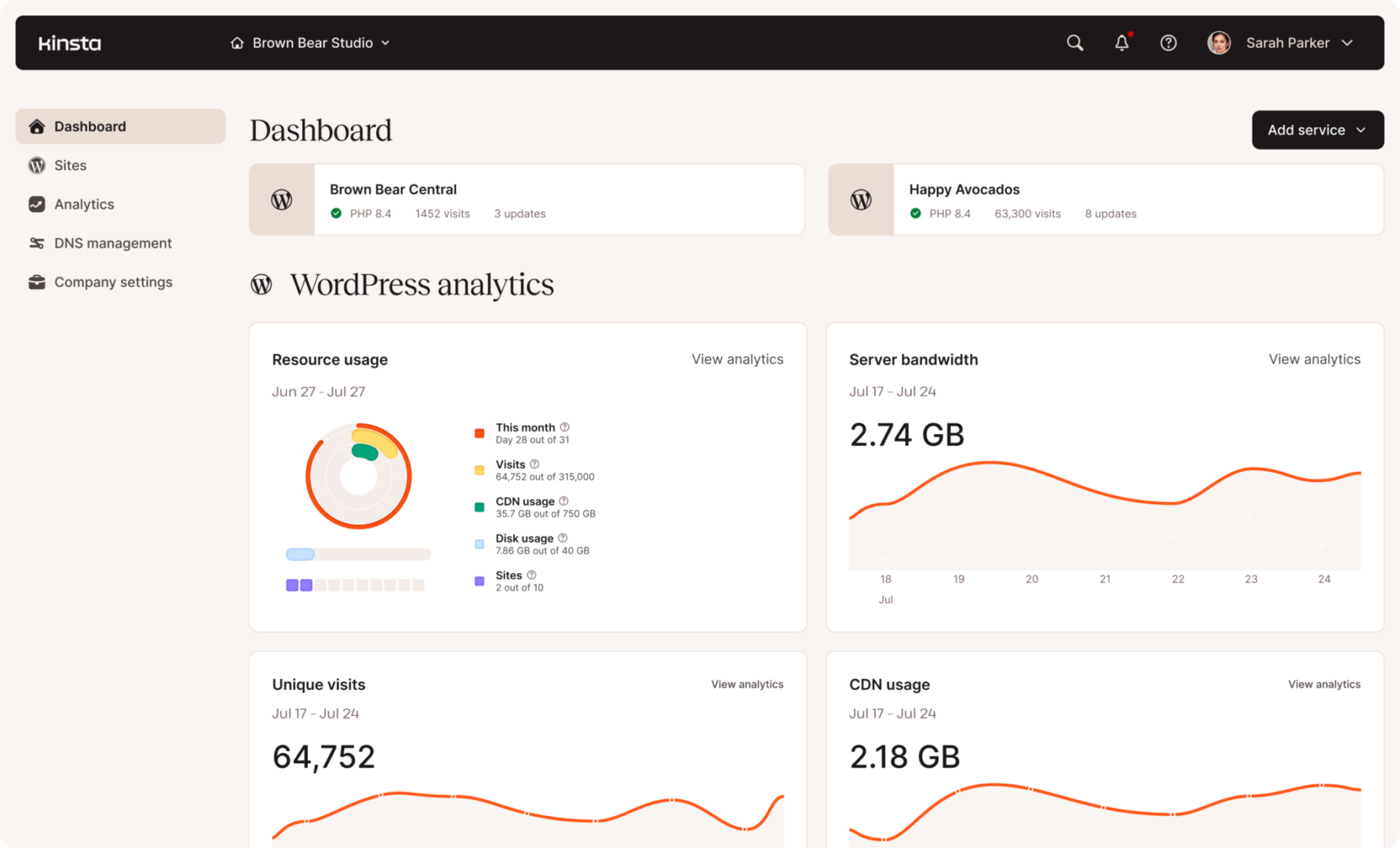

Managed hosting platforms simplify the process by consolidating several of these data sources. For example, environments that integrate CDN-level analytics, firewall monitoring, and access logs into a single dashboard help you correlate suspicious behavior across layers.

Hosting providers like Kinsta also surface traffic analytics, performance monitoring, and security event data directly within their dashboard, MyKinsta. This means you and your team can analyze bot behavior without relying on multiple external tools.

How bot traffic distorts analytics and decision-making

When automated requests mix with legitimate visits, analytics data starts to reflect activity that doesn’t represent real audience interest. Pageviews and session counts may appear to rise steadily even when actual engagement, conversions, or revenue remain unchanged. Without separating automated traffic from human sessions, you may interpret inflated traffic numbers as growth and make strategic decisions based on misleading signals.

Engagement metrics become especially unreliable. Bots often generate sessions with extremely short durations, immediate exits, or repeated page requests, which can artificially raise or lower bounce rate and time-on-page measurements. In some cases, scraping bots repeatedly request specific pages, creating the appearance that certain content performs far better with real users than it actually does.
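A little arithmetic shows how bot sessions skew bounce rate. In this made-up example, 100 human sessions bounce 40% of the time, and 50 flagged bot sessions each view one page and leave. Blended together, the reported bounce rate rises to 60%; filtering out the flagged sessions restores the human figure. The session fields and the `bot` flag are hypothetical; the flag would come from whatever classification you apply.

```python
def bounce_rate(sessions: list[dict]) -> float:
    """Share of sessions with exactly one pageview."""
    if not sessions:
        return 0.0
    return sum(1 for s in sessions if s["pageviews"] == 1) / len(sessions)


# Hypothetical data: 100 human sessions (40 bounce) plus 50 flagged bot sessions (all bounce).
humans = [{"pageviews": 1, "bot": False}] * 40 + [{"pageviews": 4, "bot": False}] * 60
bots = [{"pageviews": 1, "bot": True}] * 50
all_sessions = humans + bots

print(bounce_rate(all_sessions))                               # 0.6
print(bounce_rate([s for s in all_sessions if not s["bot"]]))  # 0.4
```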

Geographic, device, and referral data can also become distorted. Automated traffic frequently originates from data centers, proxy networks, or concentrated regions that don’t match the site’s actual customer base. When these sessions are included in reports, marketing teams may invest in the wrong regions, optimize for misleading device trends, or misinterpret campaign performance.

Over time, these inaccuracies affect reporting, performance planning, infrastructure scaling decisions, and marketing investments, all of which rely on traffic analytics to predict demand. If a significant portion of that traffic consists of automated requests, businesses risk overestimating growth, allocating resources inefficiently, or overlooking real user behavior that needs attention.

Best practices for managing different types of traffic

Managing modern web traffic requires a balanced approach that protects site performance without interfering with legitimate automation or real users. Rather than trying to block anything that appears automated, the goal is to apply policies that match the behavior and intent of each traffic type.

Prioritize real user experience

Optimize performance, availability, and accessibility so legitimate visitors can access content quickly and reliably, even during traffic spikes. Fast load times, stable infrastructure, and resilient caching help ensure that legitimate users aren’t affected when automated traffic increases. You can optimize for performance directly within Kinsta by using the Kinsta API with Google PageSpeed Insights.

Allow and monitor helpful automation

Search engine crawlers, uptime monitors, and validation tools should be explicitly allowed where appropriate so indexing, monitoring, and integrations continue to function correctly. Reviewing crawl behavior periodically helps confirm that legitimate bots operate within reasonable limits.

Apply behavior-based protections to harmful traffic

Rate limits, security challenges, and targeted blocking rules work best when triggered by suspicious request patterns rather than static assumptions about IP ranges or user agents. Behavioral controls reduce the likelihood of blocking legitimate services while still mitigating abusive activity.
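A common building block for behavior-triggered rate limiting is a token bucket per client: each client can burst up to a fixed number of requests, and tokens refill at a steady rate, so normal browsing is unaffected while sustained floods get throttled. This is a sketch of the logic only (in production this usually lives in a CDN, WAF, or web server); keying by IP, the capacity of 5, and the rate of 1 token per second are assumptions, and 429 is the standard “Too Many Requests” status code.

```python
import time
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TokenBucket:
    """Allows bursts of up to `capacity` requests, refilled at `rate` tokens per second."""
    capacity: float
    rate: float
    tokens: float = field(init=False)
    updated: Optional[float] = field(default=None, init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity  # start full so first-time visitors aren't throttled

    def allow(self, now: Optional[float] = None) -> bool:
        """Spend one token if available; False means the client should be throttled."""
        now = time.monotonic() if now is None else now
        if self.updated is not None:
            elapsed = max(0.0, now - self.updated)
            self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# One bucket per client key; keying by IP is an assumption (a session or API key also works).
buckets = defaultdict(lambda: TokenBucket(capacity=5, rate=1.0))


def handle(client: str, now: float) -> int:
    """Return 200 if the request may proceed, or 429 (Too Many Requests) if throttled."""
    return 200 if buckets[client].allow(now) else 429


statuses = [handle("198.51.100.9", now=0.1 * i) for i in range(8)]
print(statuses)  # [200, 200, 200, 200, 200, 429, 429, 429]
```

Passing `now` explicitly keeps the example deterministic; in a live server you would omit it and let `time.monotonic()` supply the clock.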

Review and adjust policies regularly

Traffic patterns change as sites grow, campaigns launch, and new automated systems interact with content. Periodic reviews of firewall rules, rate limits, and monitoring alerts help ensure that protections match your current traffic behavior instead of relying on outdated assumptions.

Use traffic source information to make better decisions

Traffic volume alone rarely tells the full story of how a website performs. When human visits, helpful automation, and harmful bot activity are separated, analytics data becomes far more meaningful and actionable.

Clean traffic segmentation allows teams to measure genuine audience growth, understand real engagement patterns, and evaluate marketing performance without automated noise distorting the results.

More accurate traffic classification also improves operational decisions. Performance planning, infrastructure scaling, and security strategies become easier to align with real demand when automated requests are measured and managed separately.

If your current hosting environment provides limited visibility into traffic sources, it may be worth evaluating platforms that offer deeper traffic intelligence and integrated bot management tools. Managed environments like Kinsta provide built-in analytics, firewall protections, and edge-level traffic insights that help distinguish real users from automated activity.

Kinsta’s newer bandwidth-based hosting plans also add flexibility by more closely matching hosting resources to actual traffic consumption. If you have questions, you can talk to our support team anytime.

The post How to distinguish traffic from bots to identify real visits, helpful bots, and harmful attacks appeared first on Kinsta®.
