If you’ve poked around the WordPress backend, you may have noticed a setting that says “Discourage search engines from indexing this site” and wondered what it meant.

Or maybe you’re looking for a way to hide your site from unwanted visitors and wondering whether this one little checkbox is enough to keep your content safely private.

What does this option mean? What exactly does it do to your site? And why should you avoid relying on it, even if you’re trying to hide your content?

Here are the answers, along with a few other methods to deindex your site and block access to certain pages.

What Does “Discourage Search Engines from Indexing This Site” Mean?

Have you ever wondered how search engines index your site and gauge your SEO? They do it with an automated program called a spider, also known as a robot or crawler. Spiders “crawl” the web, visiting websites and logging all your content.

Google uses them to decide how to rank and place your website in the search results, grab blurbs from your articles for the search results page, and pull your images into Google Images.

When you tick “Discourage search engines from indexing this site,” WordPress modifies your robots.txt file (a file that gives instructions to spiders on how to crawl your site). It can also add a meta tag to your site’s header that tells Google and other search engines not to index any content on your entire site.

The key word here is “discourage”: search engines have no obligation to honor this request, especially search engines that don’t use the standard robots.txt syntax that Google does.

Web crawlers will still be able to find your site, but properly configured crawlers will read your robots.txt and leave without indexing the content or showing it in their search results.
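To make the crawler’s side of this concrete, here is a minimal sketch in Python of how a well-behaved crawler might consult robots.txt before fetching a page. It uses the standard library’s urllib.robotparser; the function name, the ExampleBot user agent, and the example.com URLs are illustrative assumptions, not anything WordPress or a search engine ships.

```python
from urllib.robotparser import RobotFileParser


def allowed_to_crawl(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() takes an iterable of lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Sample rules (an assumption for illustration): block every crawler from every path.
DISCOURAGED = "User-agent: *\nDisallow: /\n"

if __name__ == "__main__":
    # A polite crawler would skip the page when this prints False.
    print(allowed_to_crawl(DISCOURAGED, "ExampleBot", "https://example.com/any-page/"))
```

Nothing enforces this check. A crawler that never runs it simply fetches the page anyway, which is why the setting can only discourage.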

In the past, this option in WordPress didn’t stop Google from showing your website in the search results, just from indexing its content. You could still see your pages appear in search results with an error like “No information is available for this page” or “A description for this result is not available because of the site’s robots.txt.”

While Google wasn’t indexing the page, it didn’t hide the page entirely either. This anomaly resulted in people being able to visit pages they weren’t meant to see. Thanks to WordPress 5.3, it now works properly, blocking both indexing and listing of the site.

You can imagine how this would destroy your SEO if you enabled it by accident. It’s crucial to use this option only if you really don’t want anyone to see your content, and even then, it may not be the only measure you want to take.

Why You Might Not Want to Index Your Site

Websites are made to be seen by people. You want users to read your articles, buy your products, and consume your content, so why would you intentionally try to block search engines?

There are a few reasons why you might want to hide part or all of your site.

- Your site is in development and not ready to be seen by the public.

- You’re using WordPress as a content management system but want to keep said content private.

- You’re trying to hide sensitive information.

- You want your site accessible only to a small number of people with a link or through invites only, not through public search pages.

- You want to put some content behind a paywall or other gate, such as newsletter-exclusive articles.

- You want to cut off traffic to old, outdated articles.

- You want to prevent getting SEO penalties on test pages or duplicate content.

There are better solutions for some of these, such as using a proper offline development server, setting your articles to private, or putting them behind a password, but there are legitimate reasons why you might want to deindex part or all of your site.

How to Check if Your Site Is Discouraging Search Engines

While you may have legitimate reasons to deindex your site, it can be a horrible shock to learn that you’ve turned this setting on without meaning to or left it on by accident. If you’re getting zero traffic and suspect your site isn’t being indexed, here’s how to confirm it.

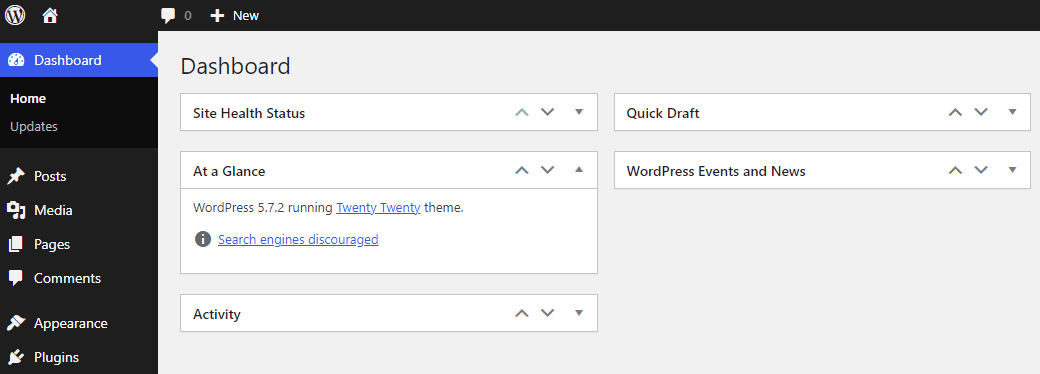

One easy way is to check the At a Glance box located on the home screen of your admin dashboard. Just log into your backend and check the box. If you see “Search Engines Discouraged,” then you know you’ve activated that setting.

“At a Glance” in the WordPress dashboard.

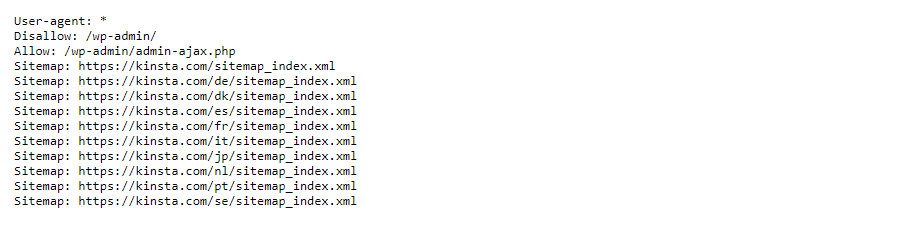

An even more reliable way is to check your robots.txt. You can easily check this in the browser without even logging into your site.

To check robots.txt, all you need to do is add /robots.txt to the end of your site URL. For instance: https://kinsta.com/robots.txt

If you see Disallow: / then your entire site is being blocked from indexing.

“Disallow” in robots.txt.

If you see Disallow: followed by a URL path, like Disallow: /wp-admin/, it means that any URL with the /wp-admin/ path is being blocked. This structure is normal for some pages, but if, for instance, it’s blocking /blog/ which has pages you want indexed, it could cause problems!
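If you would rather audit the file programmatically than read it by eye, the check described above can be sketched in Python. This is a deliberately simplified reader, not a full robots.txt implementation: it only collects Disallow paths from groups that name the given user agent, and the function names and sample file contents are assumptions made for illustration.

```python
def disallowed_paths(robots_txt: str, agent: str = "*") -> list[str]:
    """Collect Disallow paths from groups whose User-agent line matches agent."""
    paths = []
    current_agents = []    # user agents named by the group being read
    reading_rules = False  # True once the current group has reached its rules
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            if reading_rules:  # a User-agent line after rules starts a new group
                current_agents = []
                reading_rules = False
            current_agents.append(value.lower())
        elif field in ("allow", "disallow"):
            reading_rules = True
            # An empty Disallow value blocks nothing, so it is skipped.
            if field == "disallow" and value and agent.lower() in current_agents:
                paths.append(value)
    return paths


def blocks_entire_site(paths: list[str]) -> bool:
    """Disallow: / covers every URL on the site."""
    return "/" in paths


# Sample file contents, assumed here for illustration only.
SAMPLE = "User-agent: *\nDisallow: /wp-admin/\nAllow: /wp-admin/admin-ajax.php\n"

if __name__ == "__main__":
    found = disallowed_paths(SAMPLE)
    print(found, blocks_entire_site(found))
```

With the sample above, only /wp-admin/ is reported and the whole-site check comes back negative. A file containing Disallow: / under User-agent: * would trip it.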

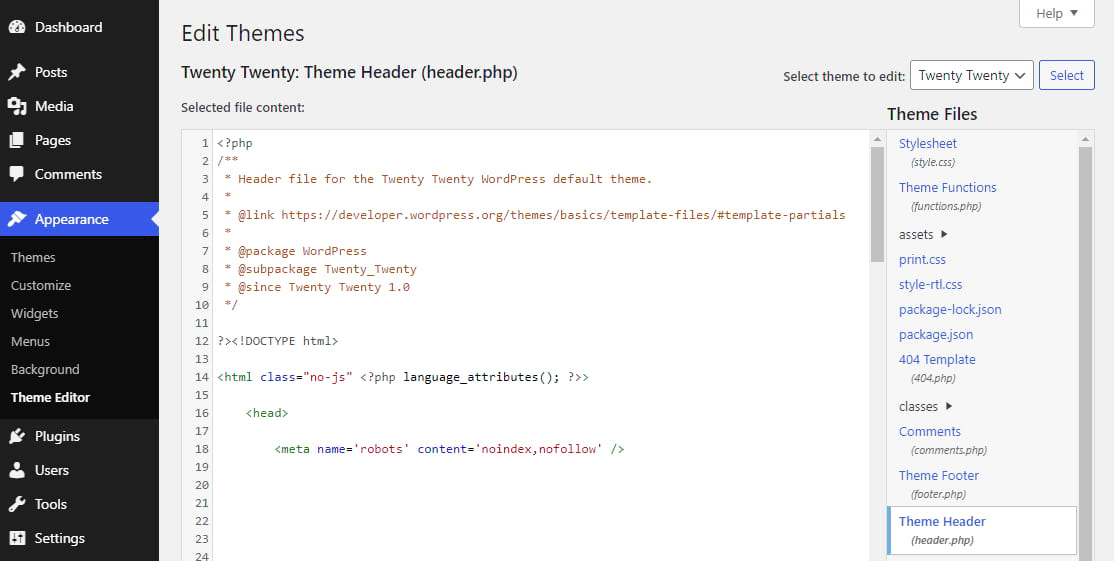

Now that WordPress uses meta tags rather than robots.txt to deindex your site, you should also check your header for changes.

Log in to your backend and go to Appearance > Theme Editor. Find Theme Header (header.php) and look for the following code:

noindex, nofollow in header.php.

You can also check functions.php for the noindex tag, as it’s possible to remotely insert code into the header through this file.

If you find this code in your theme files, then your site isn’t being indexed by Google. But rather than removing it manually, let’s try to turn off the original setting first.
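Another way to run the same check is against the HTML your site actually serves rather than the theme files. The Python sketch below scans markup for a robots meta tag and lists its directives, using only the standard library’s html.parser. The class and function names and the sample markup are assumptions for illustration, and the exact tag your WordPress version emits may differ.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collects the directives of every <meta name="robots"> tag it sees."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() == "robots":
            content = attributes.get("content", "")
            # "noindex, nofollow" becomes ["noindex", "nofollow"]
            self.directives += [d.strip().lower() for d in content.split(",") if d.strip()]


def robots_directives(html: str) -> list[str]:
    finder = RobotsMetaFinder()
    finder.feed(html)
    return finder.directives


# Sample markup, assumed here for illustration only.
SAMPLE_HEAD = "<head><meta name='robots' content='noindex, nofollow' /></head>"

if __name__ == "__main__":
    print("noindex" in robots_directives(SAMPLE_HEAD))
```

To test a live page, you could save its source from the browser and pass the text to robots_directives. A result containing noindex means the page is asking search engines not to index it.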

How to Discourage Search Engine Indexing in WordPress

If you want to skip the extra steps and go straight to the original setting, here’s how to activate or deactivate the “Discourage search engines” option in WordPress.

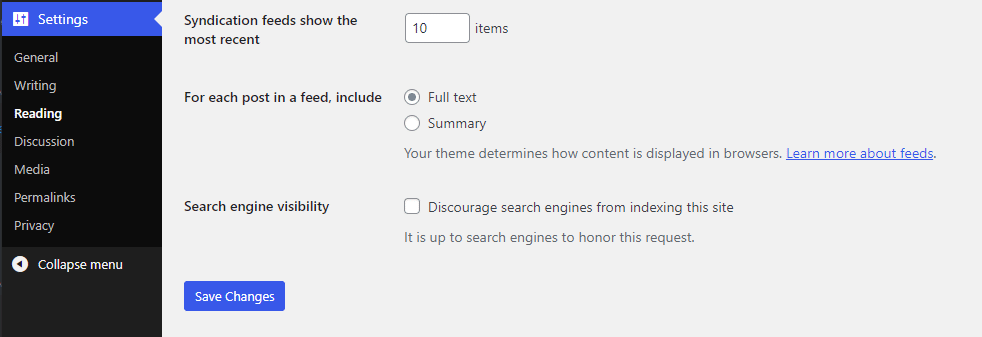

Log in to your WordPress dashboard and navigate to Settings > Reading. Look for the Search Engine Visibility option with a checkbox labeled “Discourage search engines from indexing this site.”

Search engine visibility checkbox.

If you find that this is already on and want your site to be indexed, then uncheck it. If you intend to prevent your site from being indexed, check it (and jot down a note somewhere reminding you to turn it off later!).

Now click Save Changes, and you’re good to go. It may take some time for your site to be reindexed or for it to be pulled from the search results.

If your site is still deindexed, you can also remove the noindex code from your header file, or manually edit robots.txt to remove the “Disallow” flag.

So that’s simple enough, but what are some reasons why you should avoid this option, or at least not rely entirely on it?

Disadvantages of Using the Discourage Search Engines Option

It seems simple: tick a checkbox and no one will be able to see your site. Isn’t that good enough? Why should you avoid using this option on its own?

When you turn on this setting or any option like it, all it does is add a tag to your header or your robots.txt. As shown by older versions of WordPress still allowing your site to be listed in search results, a small glitch or other error can result in people seeing your supposedly hidden pages.

In addition, it’s entirely up to search engines to honor the request not to crawl your site. Major search engines like Google and Bing usually will, but not all search engines use the same robots.txt syntax, and not all spiders crawling the web are sent out by search engines.

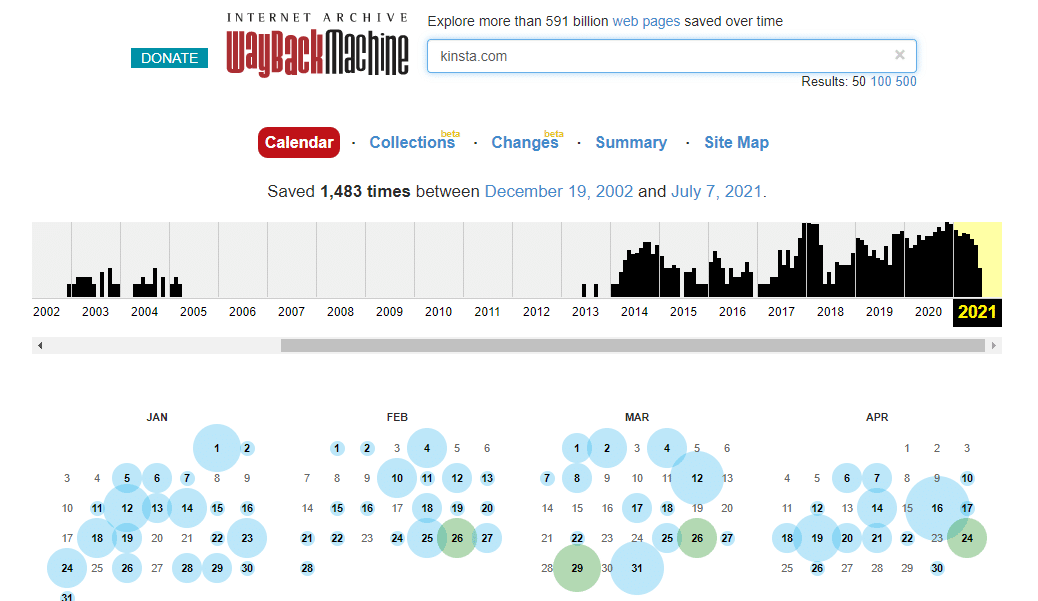

For instance, one service that uses web crawlers is the Wayback Machine. And if your content is indexed by such a service, it’s on the web forever.

Wayback Machine.

You may think that just because your brand new site has no links to it, it’s safe from spiders, but that isn’t true. Existing on a shared server, sending an email with a link to your website, or even visiting your site in a browser (especially Chrome) may open your site up to being crawled.


If you want to hide content, it’s just not a good idea to add a parameter and hope it’ll do the trick.

And let’s be clear, if the content you’re deindexing is of a sensitive or personal nature, you should absolutely not rely on robots.txt or a meta tag to hide it.

Last but not least, this option will completely hide your site from search engines, while many times you only want to deindex certain pages.

So what should you be doing instead of or alongside this method?

Other Ways to Prevent Search Engine Indexing

While the option provided by WordPress will usually do its job, for certain situations, it’s often better to use other methods of hiding content. Even Google itself says not to use robots.txt to hide pages.

As long as your site has a domain name and is on a public-facing server, there’s no way to guarantee your content won’t be seen or indexed by crawlers unless you delete it or hide it behind a password or login requirement.

That said, what are better ways to hide your site or certain pages on it?

Block Search Engines with .htaccess

While its implementation is functionally the same as simply using the “Discourage search engines” option, you may prefer to manually use .htaccess to block indexing of your site.

You’ll need to use an FTP/SFTP program to access your site and open the .htaccess file, usually located in the root folder (the first folder you see when you open your site) or in public_html. Add this code to the file and save:

Header set X-Robots-Tag "noindex, nofollow"

Note: This method only works for Apache servers. NGINX servers, such as those running on Kinsta, will need to add this code to the .conf file instead, which can be found in /etc/nginx/ (you can find an example of meta tag implementation here):

add_header X-Robots-Tag "noindex, nofollow";

Password Protect Sensitive Pages

If there are certain articles or pages you don’t want search engines to index, the best way to hide them is to password protect your site. That way, only you and the users you choose will be able to see that content.


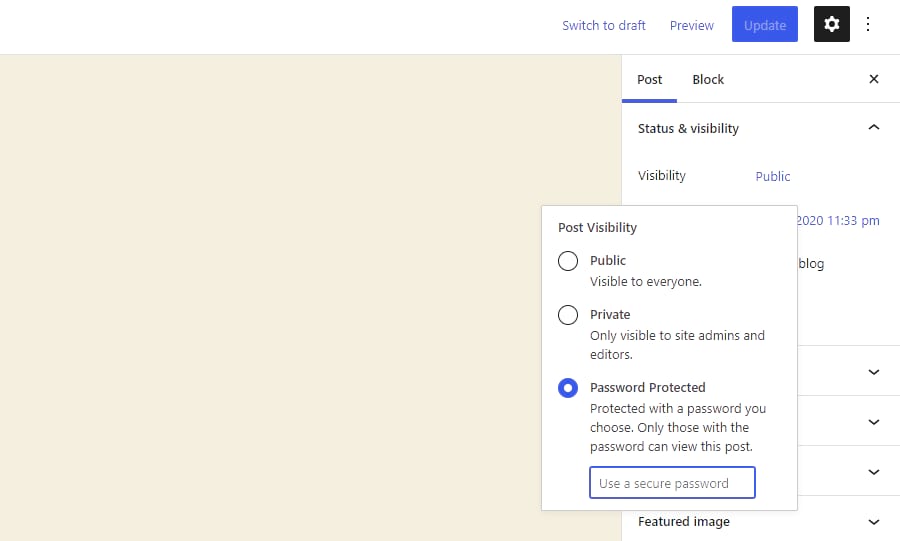

Luckily, this functionality is built into WordPress, so there’s no need to install any plugins. Just go to Posts or Pages and click on the one you want to hide. Edit your page and look for the Status and Visibility > Visibility menu on the right-hand side.

If you’re not using Gutenberg, the process is similar. You can find the same menu in the Publish box.

Change the Visibility to Password Protected and enter a password, then save, and your content is now hidden from the general public.

Setting a post to Password Protected.

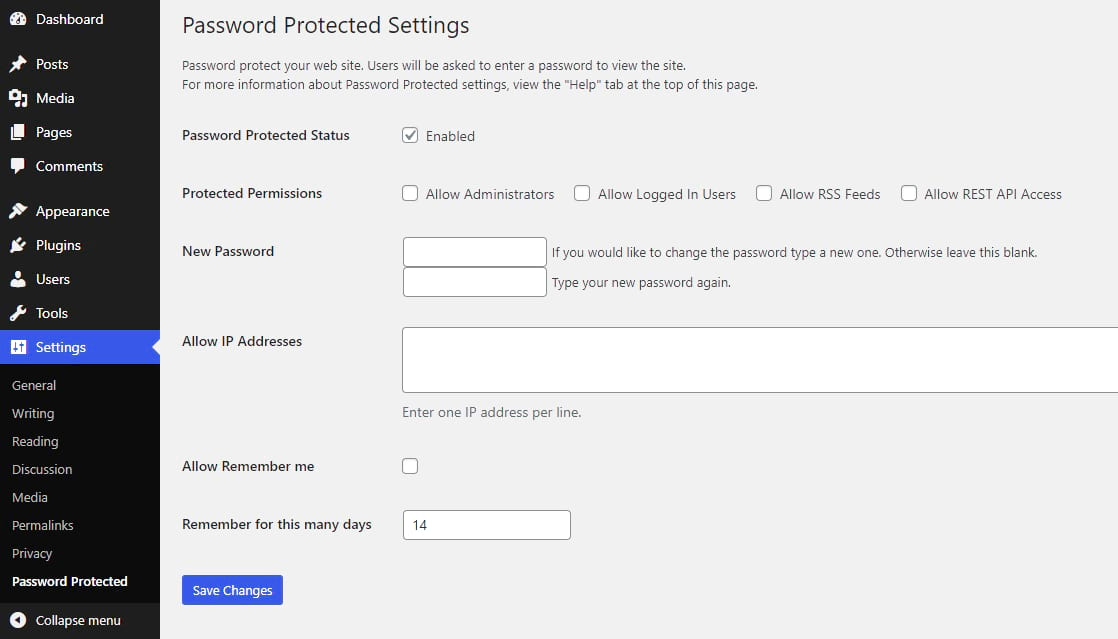

What if you want to password protect your entire site? It’s not practical to require a password for every single page.

Kinsta users are in luck: You can enable password protection in Sites > Tools, requiring both a username and password.

Otherwise, you can use a content restriction plugin (e.g. Password Protected). Install and activate it, then head to Settings > Password Protected and enable Password Protected Status. This gives you finer control, even allowing you to whitelist certain IP addresses.


Install a WordPress Plugin

When WordPress’ default functionality isn’t enough, a good plugin can often solve your problems.

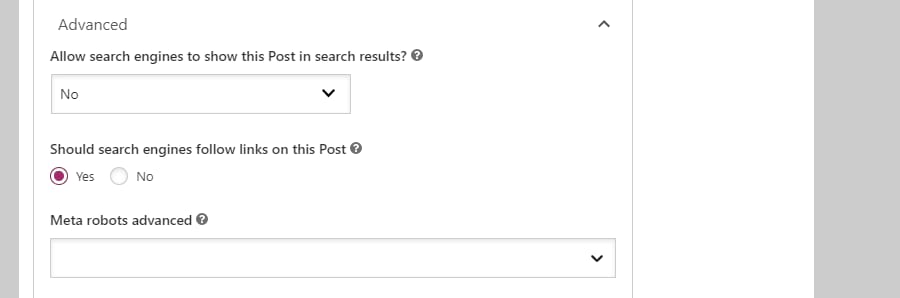

For instance, if you want to deindex specific pages rather than your entire site, Yoast has this option available.

In Yoast SEO, you can open up a page you want to hide and look for the option under the Advanced tab: Allow search engines to show this Post in search results? Change it to No and the page will be hidden.

Yoast SEO settings

You should note that both of these rely on the same methods as WordPress’ default option to discourage search engine indexing, and are subject to the same flaws. Some search engines may not honor your request. You’ll need to use other methods if you really want to hide this content completely.

Another solution is to paywall your content or hide it behind a required login. The Simple Membership or Ultimate Member plugins can help you set up free or paid membership content.

Simple Membership plugin.

Use a Staging Site for Testing

When working on test projects or in-progress websites, your best bet for keeping them hidden is to use a staging or development site. These websites are private, often hosted on a local machine that no one but you and others you’ve allowed can access.

Many web hosts will give you easy-to-deploy staging sites and allow you to push them to your public server when you’re ready. Kinsta offers a one-click staging site for all plans.

You can access your staging sites in MyKinsta by going to Sites > Info and clicking the Change environment dropdown. Click the Staging environment and then the Create a staging environment button. In a few minutes, your development server will be up and ready for testing.

If you don’t have access to an easy way to create a staging site, the WP STAGING plugin can help you duplicate your installation and move it into a folder for easy access.

Use Google Search Console to Temporarily Hide Websites

Google Search Console is a service that allows you to claim ownership of your websites. With this comes the ability to block Google from indexing certain pages temporarily.

This method has a few problems: It’s Google-exclusive (so sites like Bing won’t be affected) and it only lasts 6 months.

But if you want a quick and easy way to get your content out of Google search results temporarily, this is the way to do it.

If you haven’t already, you’ll need to add your site to Google Search Console. With that done, open Removals and select Temporary Removals > New Request. Then click Remove this URL only and link the page you want to hide.

This is an even more reliable way to block content, but again, it works only for Google and only lasts 6 months.

Summary

There are many reasons why you might want to hide content on your site, but relying on the “Discourage search engines from indexing this site” option isn’t the best way to make sure such content isn’t seen.

Unless you want to hide your entire website from the web, you should never click this option, as it can do huge damage to your SEO if it’s accidentally toggled.

And even if you do want to hide your site, this default option is an unreliable method. It should be paired with password protection or other blocking, especially if you’re dealing with sensitive content.

Do you use any other methods to hide your site or parts of it? Let us know in the comments section.

The post Discourage Search Engines from Indexing This Site: What It Means and How to Use It appeared first on Kinsta.
