Running local LLMs gets expensive fast, not just in money, but in time.

You find a model that looks promising, pull it into Ollama or llama.cpp, then realize it is too slow, too large, or simply wrong for your machine. By the time you figure that out, you have already wasted bandwidth, storage, and a chunk of your afternoon.

That’s the problem llmfit is built to solve.

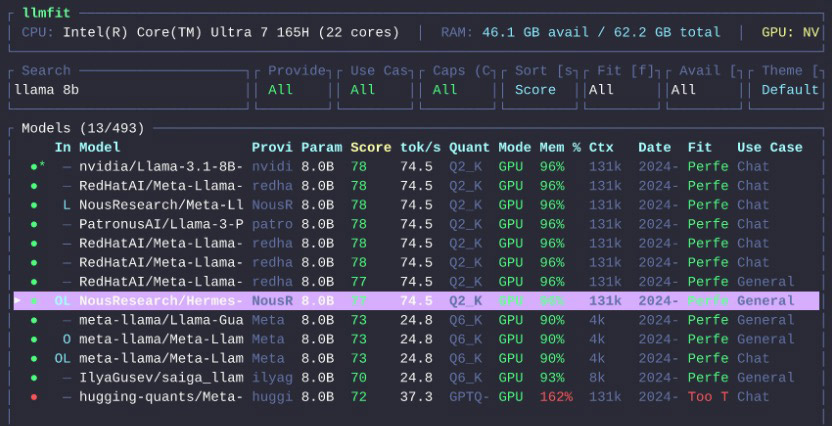

Created by Alex Jones, llmfit is a terminal tool that checks your hardware, compares it against hundreds of models, and recommends the ones that are actually practical for your setup. Instead of guessing whether a model will fit into RAM or VRAM, it ranks options by fit, speed, quality, and context so you can make a smarter choice before downloading anything.

If you run local models often, this is one of those tools that feels immediately sensible.

What Is llmfit?

llmfit is a local model recommendation tool for people who run LLMs on their own hardware.

It detects your system specs, including RAM, CPU, and GPU, then ranks models based on what your system can realistically handle. It supports both an interactive terminal UI and a classic CLI mode, so you can browse visually or script it into your workflow.

According to the project README, it works with local runtime providers such as Ollama, llama.cpp, MLX, Docker Model Runner, and LM Studio.

In plain English, llmfit answers one very practical question:

Which LLM should I run on this machine?

What Does llmfit Do?

At its core, llmfit helps you stop guessing.

It can:

- detect your CPU, RAM, GPU, and available VRAM

- check model size and quantization options

- estimate which models will run well, barely run, or not fit at all

- recommend models by use case, such as coding, chat, reasoning, or embeddings

- simulate hardware setups, so you can test hypothetical builds before upgrading or buying anything

- estimate what hardware a specific model would need

That last part matters more than it sounds.

Most local AI tools tell you what exists. llmfit tries to tell you what’s practical.

Why llmfit Is Useful

There are already plenty of places to browse models.

What’s usually missing is a clear answer as to whether a model makes sense on your machine.

A 7B model might technically run, but if it crawls, that is not much use. A quantized model might squeeze into memory, but leave too little headroom for the context length you want. llmfit tries to bridge that gap by combining hardware detection, model scoring, and runtime awareness.
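To make the headroom point concrete, here is a back-of-the-envelope sketch in Python. It is not how llmfit scores models; it simply adds the approximate weight size at a given quantization to the approximate KV cache size for a given context length. The function names and the 8B-class model shape (32 layers, 8 KV heads, head dimension 128, roughly 4.5 bits per weight) are illustrative assumptions, and real runtimes add their own overhead on top.

```python
# Rough memory estimate for a quantized model.
# Illustrative only: this is NOT llmfit's actual scoring logic.

GIB = 1024 ** 3


def weights_bytes(n_params, bits_per_weight):
    """Approximate size of the model weights at a given quantization."""
    return n_params * bits_per_weight / 8


def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_len, bytes_per_value=2):
    """Approximate KV cache size: one key and one value vector per layer per token."""
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value


def estimate_gib(n_params, bits_per_weight, n_layers, n_kv_heads, head_dim, context_len):
    """Weights plus KV cache, in GiB. Ignores runtime and activation overhead."""
    total = weights_bytes(n_params, bits_per_weight) + kv_cache_bytes(
        n_layers, n_kv_heads, head_dim, context_len
    )
    return total / GIB


if __name__ == "__main__":
    # Assumed shape for an 8B-class model; check the model card for real values.
    for ctx in (2048, 8192, 32768):
        need = estimate_gib(8e9, 4.5, n_layers=32, n_kv_heads=8, head_dim=128, context_len=ctx)
        print(f"context {ctx:>6}: ~{need:.1f} GiB")
```

The weights stay the same size at every context length, while the cache grows linearly with it, which is why a model that fits at a short context can run out of room at a long one.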

If you’re new to running local models, setting up a local LLM launcher is a useful first step before narrowing down the right fit with llmfit. The tool is useful for a few different kinds of users:

- people new to local LLMs who do not know where to start

- developers comparing Ollama, MLX, or llama.cpp setups

- anyone planning an upgrade and wanting to know what more RAM or VRAM would unlock

- teams running local AI across different machines and needing a quick way to compare fit

How to Install llmfit

The project offers several installation options.

Homebrew

If you’re on macOS or Linux with Homebrew:

    brew install llmfit

MacPorts

If you use MacPorts:

    port install llmfit

Windows with Scoop

    scoop install llmfit

Quick Install Script

For macOS or Linux, the project also provides an install script:

    curl -fsSL https://llmfit.axjns.dev/install.sh | sh

If you want a user-local install without sudo:

    curl -fsSL https://llmfit.axjns.dev/install.sh | sh -s -- --local

Docker

You can also run it with Docker:

    docker run ghcr.io/alexsjones/llmfit

Build from Source

If you prefer building it yourself:

    git clone https://github.com/AlexsJones/llmfit.git
    cd llmfit
    cargo build --release

The binary will be available at:

    target/release/llmfit

How to Use llmfit

The easiest way to start is to just run it.

    llmfit

That launches the interactive terminal UI.

In the interface, llmfit shows your detected hardware at the top and a ranked list of models below it. You can search, filter, compare, and sort models without leaving the app.

A few useful keys from the project documentation:

- j/k or arrow keys to move through models

- / to search

- f to filter by fit level

- s to change sort order

- p to open hardware planning mode

- S to simulate different hardware

- d to download the selected model

- Enter to open model details

- q to quit

If you prefer command-line output instead of the TUI, use CLI mode:

    llmfit --cli

Here are a few commands worth knowing.

Show Your Detected System Specs

    llmfit system

List All Known Models

    llmfit list

Search for a Model

    llmfit search "llama 8b"

Get Recommendations in JSON

    llmfit recommend --json --limit 5

Get Coding-Focused Recommendations

    llmfit recommend --json --use-case coding --limit 3

Estimate Hardware Needed for a Specific Model

    llmfit plan "Qwen/Qwen3-4B-MLX-4bit" --context 8192

Features Worth Calling Out

Hardware Simulation

This is one of the smarter parts of the tool.

In the TUI, pressing S opens simulation mode, where you can override RAM, VRAM, and CPU core count. That lets you answer questions like:

- What if I upgrade from 16GB to 32GB RAM?

- What if I move this workload to a machine with more VRAM?

- What could I run on a smaller target machine?

This is a practical way to plan hardware without leaving the app or doing the math manually.
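For a sense of what such a what-if comparison involves, here is a small Python sketch. It is not llmfit’s simulation logic; the model names, memory figures, and the 20% headroom threshold are hypothetical and only illustrate checking one model list against two memory budgets.

```python
# What-if comparison across memory budgets.
# Thresholds and model figures are illustrative assumptions, not llmfit's rules.

def classify(required_gib, available_gib, headroom=0.2):
    """Label a model as 'comfortable', 'tight', or 'too large' for a memory budget."""
    if required_gib > available_gib:
        return "too large"
    # Inside the budget, but eating into the reserved headroom.
    if required_gib > available_gib * (1 - headroom):
        return "tight"
    return "comfortable"


def what_if(models, budgets):
    """Map each model name to its label under every memory budget (in GiB)."""
    return {
        name: {budget: classify(required, budget) for budget in budgets}
        for name, required in models.items()
    }


if __name__ == "__main__":
    # Hypothetical memory needs in GiB for three made-up models.
    models = {"small-4bit": 3.0, "medium-4bit": 14.0, "large-4bit": 40.0}
    for name, row in what_if(models, [16, 32]).items():
        print(name, row)
```

In this toy data, the medium model moves from tight to comfortable when the budget doubles, while the large one stays out of reach either way. That is the kind of answer simulation mode gives you without the manual arithmetic.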

Planning Mode

Planning mode flips the usual question around.

Instead of asking what fits your current machine, it asks what hardware a specific model would need. That is useful when you already know the model you want and need a quick sense of whether your machine can run it comfortably.

Web Dashboard and API

llmfit is not limited to an interactive terminal.

It can also start a web dashboard, and it includes a REST API through llmfit serve. That makes it more useful for scripting, automation, or folding into a larger local AI setup.
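As one example of scripting, the sketch below wraps the recommend command from the CLI section and parses its JSON output in Python. It uses only the flags that appear in this article. The helper names are made up for illustration, and because the JSON schema is not described here, the script just pretty-prints whatever comes back. The REST API is left out since its endpoints are not covered in this post.

```python
# Minimal wrapper around the llmfit CLI's JSON output.
# Helper names are hypothetical; only flags shown in this article are used.
import json
import shutil
import subprocess


def build_recommend_cmd(use_case=None, limit=5):
    """Build the argument list for `llmfit recommend --json`."""
    cmd = ["llmfit", "recommend", "--json", "--limit", str(limit)]
    if use_case:
        cmd += ["--use-case", use_case]
    return cmd


def run_json(cmd):
    """Run a command and parse its stdout as JSON. Raises if the command fails."""
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return json.loads(result.stdout)


if __name__ == "__main__":
    if shutil.which("llmfit") is None:
        print("llmfit not found on PATH")
    else:
        # The output structure is not assumed; print it as-is for inspection.
        data = run_json(build_recommend_cmd("coding", limit=3))
        print(json.dumps(data, indent=2))
```

Keeping command construction separate from execution makes it easy to log or test the exact command before running it on several machines.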

Who Should Use llmfit?

llmfit makes the most sense for:

- developers who run local LLMs regularly

- people choosing between Ollama, MLX, and llama.cpp

- anyone tired of trial-and-error model downloads

- hardware tinkerers planning a RAM or GPU upgrade

- teams that want fast recommendations for different machines

If you only run one or two models and already know what works on your system, you may not need it.

But if you experiment often, compare runtimes, or keep asking, “will this model actually run well here?”, llmfit starts looking genuinely useful.

Final Thoughts

llmfit is not another model launcher.

It is closer to a fit advisor for local LLMs.

That sounds modest, but it solves a real problem. Local AI is full of model lists, leaderboards, and download buttons. What most people need first is a faster way to narrow that list to models that actually make sense on their machine.

That is exactly where llmfit looks useful.

Install it, let it inspect your hardware, and see what it recommends before downloading your next model.

The post llmfit Helps You Choose the Right Local LLM for Your Machine appeared first on Hongkiat.

Source: https://www.hongkiat.com/blog/llmfit-local-llm-guide/