For most marketers, constant updates are needed to keep their site fresh and improve their SEO rankings.

However, some sites have hundreds or even thousands of pages, making it a challenge for teams that manually push the updates to search engines. If the content is being updated so frequently, how can teams ensure that these improvements are impacting their SEO rankings?

That's where crawler bots come into play. A web crawler bot will scrape your sitemap for new updates and index the content into search engines.

In this post, we'll outline a comprehensive crawler list that covers all the web crawler bots you need to know. Before we dive in, let's define web crawler bots and show how they function.

What Is a Web Crawler?

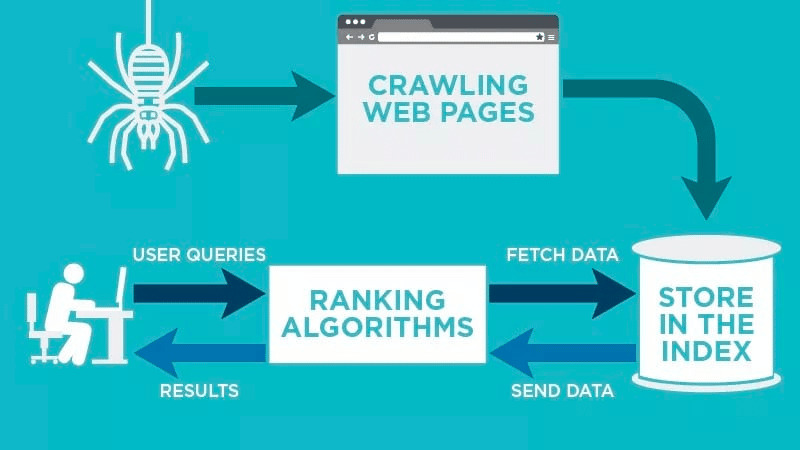

A web crawler is a computer program that automatically scans and systematically reads web pages to index the pages for search engines. Web crawlers are also known as spiders or bots.

For search engines to present up-to-date, relevant web pages to users initiating a search, a crawl from a web crawler bot must occur. This process can sometimes happen automatically (depending on both the crawler's and your site's settings), or it can be initiated directly.

Many factors impact your pages' SEO ranking, including relevancy, backlinks, web hosting, and more. However, none of these matter if your pages aren't being crawled and indexed by search engines. That is why it's so important to make sure that your site allows the right crawls to take place and to remove any barriers in their way.

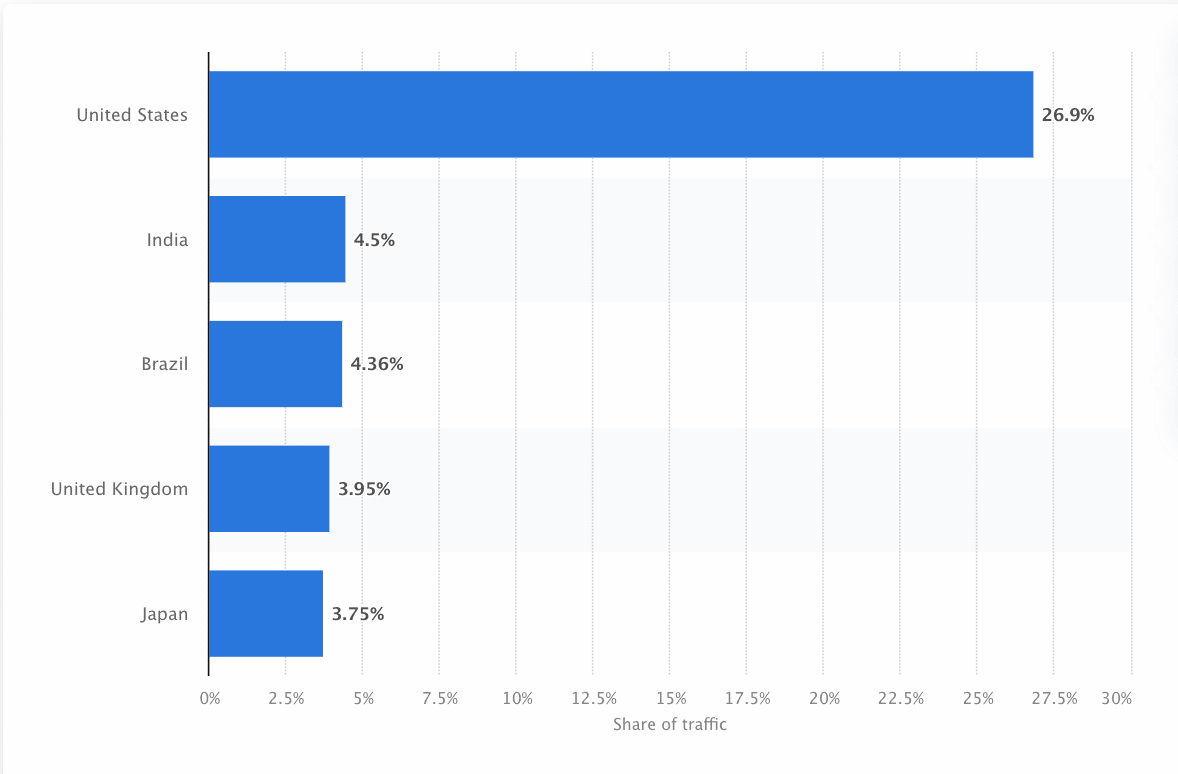

Bots must continually scan and scrape the web to ensure the most accurate information is presented. Google is the most visited website in the United States, and approximately 26.9% of searches come from American users.

However, there isn't one web crawler that crawls for every search engine. Each search engine has unique strengths, so developers and marketers sometimes compile a "crawler list." This crawler list helps them identify different crawlers in their site log to accept or block.

Marketers need to assemble a crawler list full of the different web crawlers and understand how they evaluate their site (unlike content scrapers that steal the content) to ensure that they optimize their landing pages correctly for search engines.

How Does a Web Crawler Work?

A web crawler will automatically scan your web page after it's published and index your data.

Web crawlers look for specific keywords associated with the web page and index that information for relevant search engines like Google, Bing, and more.

The search engines' algorithms will fetch that data when a user submits a query for the keyword tied to it.

Crawls start with known URLs. These are established web pages with various signals that direct web crawlers to those pages. These signals could be:

- Backlinks: The number of times other sites link to the page

- Visitors: How much traffic is heading to that page

- Domain Authority: The overall quality of the domain

Then, the crawlers store the data in the search engine's index. When a user initiates a search query, the algorithm fetches the data from the index, and it appears on the search engine results page. This process can occur within a few milliseconds, which is why results often appear quickly.
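The store-then-fetch flow described above can be pictured with a toy inverted index. This is a minimal sketch, not how any real search engine is implemented: the `PAGES` data and the `build_index` and `search` helpers are hypothetical names invented for illustration.

```python
from collections import defaultdict


def build_index(pages):
    """Map each lowercase word to the set of URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in text.lower().split():
            index[word].add(url)
    return index


def search(index, query):
    """Return the URLs that contain every word in the query, sorted."""
    words = query.lower().split()
    if not words:
        return []
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return sorted(results)


# Hypothetical crawled pages (URL -> extracted text).
PAGES = {
    "https://example.com/": "managed wordpress hosting",
    "https://example.com/blog": "web crawler list for wordpress sites",
}

INDEX = build_index(PAGES)
print(search(INDEX, "wordpress"))
```

The crawl does the slow work ahead of time (building `INDEX`), so answering a query is only a handful of dictionary lookups. That is the basic reason results can come back so quickly.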

As a webmaster, you can control which bots crawl your site. That's why it's important to have a crawler list. The robots.txt file, which lives on each site's server, tells crawlers which content they may crawl.

Depending on what you put in your robots.txt file, you can tell a crawler to scan a page or to stay away from it in the future.
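To see how robots.txt rules play out for different bots, you can test them with Python's standard `urllib.robotparser` module. The snippet below is a sketch under stated assumptions: the `ROBOTS_TXT` rules, the `allowed` helper, and the example.com URLs are made up for illustration and are not a recommended configuration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration only.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/

User-agent: Baiduspider
Disallow: /

User-agent: *
Allow: /
"""


def allowed(robots_txt, user_agent, url):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for agent in ("Googlebot", "Baiduspider", "bingbot"):
    print(agent, allowed(ROBOTS_TXT, agent, "https://example.com/private/report"))
```

Here Googlebot is kept out of `/private/` only, Baiduspider is kept out of the whole site, and every other bot falls through to the `*` group.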

By understanding what a web crawler looks for in its scan, you can understand how to better position your content for search engines.

Compiling Your Crawler List: What Are the Different Types of Web Crawlers?

As you start to think about compiling your crawler list, there are three main types of crawlers to look for. These include:

- In-house Crawlers: These are crawlers designed by a company's development team to scan its site. Typically they're used for site auditing and optimization.

- Commercial Crawlers: These are custom-built crawlers like Screaming Frog that companies can use to crawl and efficiently evaluate their content.

- Open-Source Crawlers: These are free-to-use crawlers that are built by a variety of developers and hackers around the world.

It's important to understand the different types of crawlers that exist so you know which type you need to leverage for your own business goals.

The 11 Most Common Web Crawlers to Add to Your Crawler List

There isn't one crawler that does all the work for every search engine.

Instead, there are a variety of web crawlers that evaluate your web pages and scan the content for all the search engines available to users around the world.

Let's look at some of the most common web crawlers today.

1. Googlebot

Googlebot is Google's generic web crawler that is responsible for crawling sites that will show up on Google's search engine.

Although there are technically two versions of Googlebot, Googlebot Desktop and Googlebot Smartphone (Mobile), most experts consider Googlebot one singular crawler.

This is because both follow the same unique product token (known as a user agent token) written in each site's robots.txt. The Googlebot user agent is simply "Googlebot."

Googlebot goes to work and typically accesses your site every few seconds (unless you've blocked it in your site's robots.txt). A backup of the scanned pages is saved in a unified database called Google Cache. This enables you to look at old versions of your site.

In addition, Google Search Console is another tool webmasters use to understand how Googlebot is crawling their site and to optimize their pages for search.

2. Bingbot

Bingbot was created in 2010 by Microsoft to scan and index URLs to ensure that Bing offers relevant, up-to-date search engine results for the platform's users.

Much like Googlebot, developers or marketers can define in their site's robots.txt whether they approve or deny the agent identifier "bingbot" to scan their site.

In addition, they have the ability to distinguish between mobile-first indexing crawlers and desktop crawlers since Bingbot recently switched to a new agent type. This, along with Bing Webmaster Tools, provides webmasters with greater flexibility to show how their site is discovered and showcased in search results.

3. Yandex Bot

Yandex Bot is a crawler specifically for the Russian search engine, Yandex. This is one of the largest and most popular search engines in Russia.

Webmasters can make their site pages accessible to Yandex Bot through their robots.txt file.

In addition, they could also add a Yandex.Metrica tag to specific pages, reindex pages in Yandex Webmaster, or use the IndexNow protocol, which reports new, modified, or deactivated pages.

4. Apple Bot

Apple commissioned the Apple Bot to crawl and index webpages for Apple's Siri and Spotlight Suggestions.

Apple Bot considers multiple factors when deciding which content to elevate in Siri and Spotlight Suggestions. These factors include user engagement, the relevance of search terms, number/quality of links, location-based signals, and even webpage design.

5. DuckDuckBot

DuckDuckBot is the web crawler for DuckDuckGo, which offers "Seamless privacy protection on your web browser."

Webmasters can use the DuckDuckBot API to see if DuckDuckBot has crawled their site. As it crawls, it updates the DuckDuckBot API database with recent IP addresses and user agents.

This helps webmasters identify any imposters or malicious bots trying to be associated with DuckDuckBot.

6. Baidu Spider

Baidu is the leading Chinese search engine, and the Baidu Spider is the site's sole crawler.

Google is banned in China, so it's important to enable the Baidu Spider to crawl your site if you want to reach the Chinese market.

To identify the Baidu Spider crawling your site, look for the following user agents: baiduspider, baiduspider-image, baiduspider-video, and more.

If you're not doing business in China, it may make sense to block the Baidu Spider in your robots.txt file. This will prevent the Baidu Spider from crawling your site, thereby removing any chance of your pages appearing on Baidu's search engine results pages (SERPs).

7. Sogou Spider

Sogou is a Chinese search engine that is reportedly the first search engine with 10 billion Chinese pages indexed.

If you're doing business in the Chinese market, this is another popular search engine crawler you need to know about. The Sogou Spider follows the robots exclusion rules and crawl-delay parameters in robots.txt.

As with the Baidu Spider, if you don't want to do business in the Chinese market, you should disable this spider to prevent slow site load times.
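If you would rather slow a crawler down than block it outright, a `Crawl-delay` line in robots.txt is the usual way to ask. The sketch below reads one back with Python's standard `urllib.robotparser`. Treat the details as assumptions: the `Sogou web spider` token and the 10-second value are illustrative, and you should confirm the exact user agent token in Sogou's own documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules; the "Sogou web spider" token is an assumption.
ROBOTS_TXT = """\
User-agent: Sogou web spider
Crawl-delay: 10

User-agent: *
Disallow:
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Requested delay in seconds for the named bot, or None if no delay is set.
print(parser.crawl_delay("Sogou web spider"))
print(parser.crawl_delay("bingbot"))
```

Whether a crawler honors `Crawl-delay` is up to the crawler, so this only shows what your file asks for, not what a given bot will actually do.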

8. Facebook External Hit

Facebook External Hit, otherwise known as the Facebook Crawler, crawls the HTML of an app or website shared on Facebook.

This enables the social platform to generate a sharable preview of each link posted on the platform. The title, description, and thumbnail image appear thanks to the crawler.

If the crawl isn't executed within seconds, Facebook will not show the content in the custom snippet generated before sharing.

9. Exabot

Exalead is a software company created in 2000 and headquartered in Paris, France. The company provides search platforms for consumer and enterprise clients.

Exabot is the crawler for their core search engine built on their CloudView product.

Like most search engines, Exalead factors in both backlinking and the content on web pages when ranking. Exabot is the user agent of Exalead's robot. The robot creates a "main index" which compiles the results that the search engine users will see.

10. Swiftbot

Swiftype is a custom search engine for your website. It combines "the best search technology, algorithms, content ingestion framework, clients, and analytics tools."

If you have a complex site with many pages, Swiftype offers a useful interface to catalog and index all your pages for you.

Swiftbot is Swiftype's web crawler. However, unlike other bots, Swiftbot only crawls sites that their customers request.

11. Slurp Bot

Slurp Bot is the Yahoo search robot that crawls and indexes pages for Yahoo.

This crawl is essential for both Yahoo.com and its partner sites, including Yahoo News, Yahoo Finance, and Yahoo Sports. Without it, relevant site listings wouldn't appear.

The indexed content contributes to a more personalized web experience for users with more relevant results.

The 8 Commercial Crawlers SEO Professionals Need to Know

Now that you have 11 of the most popular bots on your crawler list, let's look at some of the common commercial crawlers and SEO tools for professionals.

1. Ahrefs Bot

The Ahrefs Bot is a web crawler that compiles and indexes the 12 trillion link database that popular SEO software, Ahrefs, offers.

The Ahrefs Bot visits 6 billion websites every day and is considered "the second most active crawler" behind only Googlebot.

Much like other bots, the Ahrefs Bot follows robots.txt directives, including the allow/disallow rules in each site's file.

2. Semrush Bot

The Semrush Bot enables Semrush, a leading SEO software, to collect and index site data for its customers' use on its platform.

The data is used in Semrush's public backlink search engine, the site audit tool, the backlink audit tool, link building tool, and writing assistant.

It crawls your site by compiling a list of web page URLs, visiting them, and saving certain hyperlinks for future visits.
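The "compile a list, visit, save links for later" loop is the classic crawl frontier, and a toy version makes the idea concrete. This is only a sketch of the general technique and not Semrush's implementation: `LINK_GRAPH` is a hypothetical in-memory site standing in for real HTTP fetches, and `crawl` and `get_links` are names made up for illustration.

```python
from collections import deque

# Hypothetical site: URL -> links found on that page (stands in for fetching).
LINK_GRAPH = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/blog/crawlers", "/"],
    "/pricing": [],
    "/blog/crawlers": ["/pricing"],
}


def crawl(seed_urls, get_links, max_pages=100):
    """Visit pages breadth-first, queueing newly found links for later visits."""
    frontier = deque(seed_urls)   # URLs waiting to be visited
    seen = set(seed_urls)         # URLs already queued, to avoid repeat visits
    visited = []
    while frontier and len(visited) < max_pages:
        url = frontier.popleft()
        visited.append(url)
        for link in get_links(url):
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return visited


print(crawl(["/"], lambda url: LINK_GRAPH.get(url, [])))
```

The `max_pages` cap plays the role of a crawl budget: a crawler with a limited budget reaches pages that are linked close to the seed URLs first.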

3. Moz's Campaign Crawler Rogerbot

Rogerbot is the crawler for the leading SEO site, Moz. This crawler specifically gathers content for Moz Pro Campaign site audits.

Rogerbot follows all rules set forth in robots.txt files, so you can decide whether to block or allow Rogerbot from scanning your site.

Webmasters won't be able to search for a static IP address to see which pages Rogerbot has crawled due to its multifaceted approach.

4. Screaming Frog

Screaming Frog is a crawler that SEO professionals use to audit their own site and identify areas of improvement that will impact their search engine rankings.

Once a crawl is initiated, you can review real-time data and identify broken links or improvements that are needed to your page titles, metadata, robots, duplicate content, and more.

In order to configure the crawl parameters, you must purchase a Screaming Frog license.

5. Lumar (formerly Deep Crawl)

Lumar is a "centralized command center for maintaining your site's technical health." With this platform, you can initiate a crawl of your site to help you plan your site architecture.

Lumar bills itself as the "fastest website crawler on the market" and boasts that it can crawl up to 450 URLs per second.

6. Majestic

Majestic primarily focuses on tracking and identifying backlinks on URLs.

The company prides itself on having "one of the most comprehensive sources of backlink data on the Internet," highlighting its historic index, which increased from 5 to 15 years of links in 2021.

The site's crawler makes all of this data available to the company's customers.

7. cognitiveSEO

cognitiveSEO is another important SEO software that many professionals use.

The cognitiveSEO crawler enables users to perform comprehensive site audits that will inform their site architecture and overarching SEO strategy.

The bot will crawl all pages and provide "a fully customized set of data" that is unique for the end user. This data set will also have recommendations for the user on how they can improve their site for other crawlers, both to impact rankings and to block crawlers that are unnecessary.

8. Oncrawl

Oncrawl is an "industry-leading SEO crawler and log analyzer" for enterprise-level clients.

Users can set up "crawl profiles" to create specific parameters for the crawl. You can save these settings (including the start URL, crawl limits, maximum crawl speed, and more) to easily run the crawl again under the same established parameters.

Do I Need to Protect My Site from Malicious Web Crawlers?

Not all crawlers are good. Some may negatively impact your page speed, while others may try to hack your site or have malicious intentions.

That's why it's important to understand how to block crawlers from entering your site.

By establishing a crawler list, you'll know which crawlers are the good ones to look out for. Then, you can weed through the fishy ones and add them to your block list.
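A crawler list becomes practical once you match it against the user agents in your site log. The sketch below shows one way to do that. The `CRAWLER_TOKENS` mapping and both helper functions are hypothetical, and the tokens should be checked against each vendor's documentation before you rely on them.

```python
from collections import Counter

# Assumed user-agent tokens (lowercase) -> crawler name. Verify before use.
CRAWLER_TOKENS = {
    "googlebot": "Googlebot",
    "bingbot": "Bingbot",
    "baiduspider": "Baidu Spider",
    "ahrefsbot": "Ahrefs Bot",
    "semrushbot": "Semrush Bot",
}


def identify_crawler(user_agent):
    """Return the listed crawler whose token appears in the string, or None."""
    ua = user_agent.lower()
    for token, name in CRAWLER_TOKENS.items():
        if token in ua:
            return name
    return None


def count_crawlers(user_agents):
    """Tally log entries per listed crawler; everything else is 'unlisted'."""
    counts = Counter()
    for ua in user_agents:
        counts[identify_crawler(ua) or "unlisted"] += 1
    return counts


# Sample user-agent strings as they might appear in an access log.
SAMPLE_LOG = [
    "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
    "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
]

print(count_crawlers(SAMPLE_LOG))
```

A matching user agent only tells you what a visitor claims to be, since the string is trivial to spoof. Treat the tally as a first pass, not as proof of identity.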

How To Block Malicious Web Crawlers

With your crawler list in hand, you'll be able to identify which bots you want to approve and which ones you need to block.

The first step is to go through your crawler list and define the user agent and full agent string that is associated with each crawler, as well as its specific IP address. These are key identifying factors that are associated with each bot.

With the user agent and IP address, you can match them in your site records through a DNS lookup or IP match. If they do not match exactly, you might have a malicious bot attempting to pose as the actual one.
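One common way to run that DNS check is a reverse lookup followed by a forward confirmation: resolve the IP to a hostname, check that the hostname belongs to the crawler's domain, then resolve the hostname back and confirm it returns the same IP. The sketch below assumes the hostname suffixes in `TRUSTED_SUFFIXES`, which you should confirm against each crawler's official documentation. The helper names and the injectable lookup parameters are hypothetical and exist so the logic can be tried without network access.

```python
import socket

# Assumed hostname suffixes per crawler; confirm against official documentation.
TRUSTED_SUFFIXES = {
    "Googlebot": (".googlebot.com", ".google.com"),
    "Bingbot": (".search.msn.com",),
}


def hostname_is_trusted(hostname, suffixes):
    """True if the hostname ends with one of the crawler's expected suffixes."""
    host = hostname.lower().rstrip(".")
    return any(host.endswith(suffix) for suffix in suffixes)


def verify_crawler_ip(ip, suffixes, reverse_lookup=None, forward_lookup=None):
    """Reverse-resolve ip, check the hostname, then forward-confirm the ip."""
    reverse_lookup = reverse_lookup or (lambda addr: socket.gethostbyaddr(addr)[0])
    forward_lookup = forward_lookup or (lambda host: socket.gethostbyname_ex(host)[2])
    try:
        hostname = reverse_lookup(ip)
        if not hostname_is_trusted(hostname, suffixes):
            return False
        # The forward lookup must list the original IP, or the PTR record is fake.
        return ip in forward_lookup(hostname)
    except OSError:
        # Lookup failures (no PTR record, unknown host) count as unverified.
        return False
```

In production you would call `verify_crawler_ip(ip, TRUSTED_SUFFIXES["Googlebot"])` with the default lookups. The forward confirmation matters because whoever controls an IP range also controls its reverse DNS records and could otherwise claim any hostname.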

Then, you can block the imposter by adjusting permissions in your robots.txt file. Because robots.txt is only advisory, a bot that ignores it has to be blocked at the server or firewall level instead.

Summary

Web crawlers are useful for search engines and important for marketers to understand.

Ensuring that your site is crawled correctly by the right crawlers is important to your business's success. By keeping a crawler list, you can know which ones to watch out for when they appear in your site log.

As you follow the recommendations from commercial crawlers and improve your site's content and speed, you'll make it easier for crawlers to access your site and index the right information for search engines and the consumers seeking it.

The post Crawler List: Web Crawler Bots and How To Leverage Them for Success appeared first on Kinsta®.
