Gaining visibility for your website by ranking well on Search Engine Results Pages (SERPs) is a goal worth pursuing. However, there are probably a few pages on your site that you'd rather not direct traffic toward, such as your staging area or duplicate posts.

Fortunately, there's a simple way to handle this on your WordPress site. A robots.txt file will steer search engines (and therefore visitors) away from any content you want to hide, and it can even help strengthen your Search Engine Optimization (SEO) efforts.

In this post, we'll help you understand what a robots.txt file is and how it relates to your site's SEO. Then we'll show you how to quickly and easily create and edit this file in WordPress using the Yoast SEO plugin. Let's dive in!

An Introduction to robots.txt

In a nutshell, robots.txt is a plain text file that's stored in a website's main (root) directory. Its function is to give instructions to search engine crawlers before they explore and index your website's pages.

To understand robots.txt, you need to know a little about search engine crawlers. These are programs (or 'bots') that visit websites to learn about their content. How crawlers index your site's pages determines whether those pages end up on SERPs (and how highly they rank).

When a search engine crawler arrives at a website, the first thing it does is check for a robots.txt file in the site's main directory. If it finds one, it will take note of the instructions listed inside and follow them when exploring the site.
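To make that lookup concrete, here is a minimal Python sketch of where a crawler looks. The `robots_url()` helper is hypothetical (it isn't taken from any real crawler); it simply applies the rule that the file sits at the root of the host, whatever page the bot was originally sent to.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_url(page_url: str) -> str:
    """Return the robots.txt URL a crawler would check before fetching page_url."""
    parts = urlsplit(page_url)
    # robots.txt lives at the root of the host, whatever the page's path is;
    # the query string and fragment are dropped.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_url("https://example.com/blog/my-first-post/?ref=home"))
# https://example.com/robots.txt
```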

If there isn't a robots.txt file, the bot will simply crawl and index the entire site (or as much of it as it can find). This isn't always a problem, but there are several situations in which it could prove harmful to your site and its SEO.
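Python's standard `urllib.robotparser` module follows the same "no rules means no restrictions" logic, which makes it handy for checking this behavior. In this sketch, parsing an empty rule set stands in for a site with no robots.txt (when fetching a live file with `read()`, the module likewise treats a 4xx response such as a 404 as "allow everything"). The paths are hypothetical.

```python
from urllib.robotparser import RobotFileParser

parser = RobotFileParser()
# An empty rule set behaves like a site that has no robots.txt at all.
parser.parse([])

for path in ("/", "/staging/", "/members/forum/"):
    url = "https://example.com" + path
    # Every URL is crawlable because no rule restricts anything.
    print(path, parser.can_fetch("Googlebot", url))
```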

Why robots.txt Matters for SEO

One of the most common uses of robots.txt is to hide website content from search engines. This is also known as 'disallowing' bots from crawling certain pages. There are a few reasons you might want to do this.

The first reason is to protect your SERP rankings. Duplicate content tends to confuse search engine crawlers, since they can't list every copy on SERPs and therefore have to choose which version to prioritize. This can lead to your content competing with itself for top rankings, which is counterproductive.

Another reason you might want to hide content from search engines is to keep them from displaying sections of your website that you want to keep private, such as your staging area or private members-only forums. Encountering these pages can be confusing for users, and it can pull traffic away from the rest of your site.
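Here is a minimal sketch of rules for the two private areas mentioned above, checked with Python's `urllib.robotparser`. The `/staging/` and `/members/` paths are hypothetical; use whatever paths your site actually has. Keep in mind that robots.txt is a request that well-behaved crawlers honor, not a lock, so genuinely private areas still need a login or password protection.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for a site with a staging copy and a members-only forum.
RULES = """\
User-agent: *
Disallow: /staging/
Disallow: /members/
"""

parser = RobotFileParser()
parser.parse(RULES.splitlines())

for path in ("/staging/new-theme/", "/members/forum/", "/blog/hello-world/"):
    allowed = parser.can_fetch("Bingbot", "https://example.com" + path)
    print(f"{path}: {'crawl' if allowed else 'skip'}")
```

The first two paths print "skip" and the blog post prints "crawl", because each Disallow value blocks every URL that begins with it.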

In addition to disallowing bots from exploring certain areas of your site, you can also specify a 'crawl delay' in your robots.txt file. This can help prevent server overloads caused by bots loading and crawling multiple pages on your site at once. It can also cut down on 'Connection timed out' errors, which can be very frustrating for your users.
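A crawler that honors the directive reads the delay and spaces out its requests accordingly. This sketch reads the value with `urllib.robotparser`'s `crawl_delay()` method. The `fetch_schedule()` helper is hypothetical and only illustrates the spacing; real crawlers decide for themselves whether and how to apply the value.

```python
from urllib.robotparser import RobotFileParser

RULES = """\
User-agent: *
Crawl-delay: 10
"""

parser = RobotFileParser()
parser.parse(RULES.splitlines())

# Seconds to wait between requests, or None if the file sets no delay.
delay = parser.crawl_delay("Bingbot")


def fetch_schedule(paths, delay_seconds):
    """Hypothetical helper: (offset_in_seconds, path) pairs spaced delay_seconds apart."""
    step = delay_seconds or 0
    return [(i * step, path) for i, path in enumerate(paths)]


print(delay)  # 10
print(fetch_schedule(["/", "/about/", "/blog/"], delay))
# [(0, '/'), (10, '/about/'), (20, '/blog/')]
```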

How to Create and Edit robots.txt in WordPress (In 3 Steps)

Fortunately, the Yoast SEO plugin makes it easy to create and edit your WordPress site's robots.txt file. The steps below assume that you've already installed and activated Yoast SEO on your site.

Step 1: Access the Yoast SEO File Editor

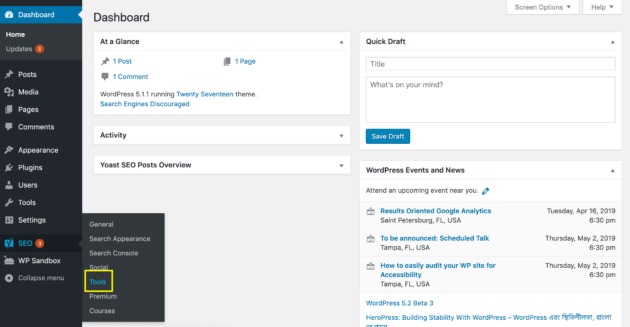

One way to create or edit your robots.txt file is by using Yoast's File Editor tool. To access it, visit your WordPress admin dashboard and navigate to Yoast SEO > Tools in the sidebar:

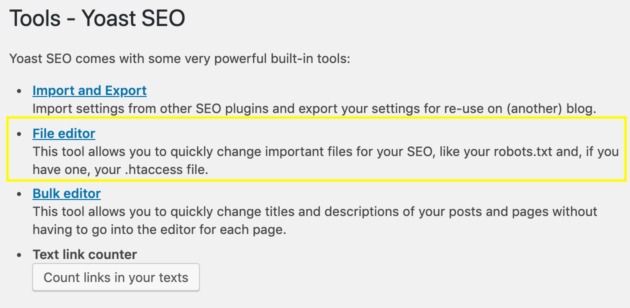

On the resulting screen, select File Editor from the list of tools:

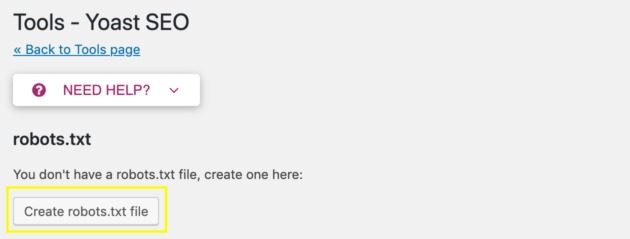

If you already have a robots.txt file, this will open a text editor where you can make changes to it. If you don't have a robots.txt file, you'll see a button for creating one instead:

Click on it to automatically generate a robots.txt file and save it to your website's main directory. There are two benefits to setting up your robots.txt file this way.

First, you can be sure that the file is saved in the right place, which is essential for ensuring that search engine crawlers can find it. Second, the file will be named properly, in all lowercase. This is important because search engine crawlers are case sensitive and won't recognize files with names such as Robots.txt.
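Both requirements can be expressed as a single check on the URL path. The `is_valid_robots_location()` function below is a hypothetical helper for illustration: only a lowercase robots.txt sitting directly in the site root passes.

```python
from urllib.parse import urlsplit


def is_valid_robots_location(url: str) -> bool:
    """Return True only for a lowercase robots.txt sitting at the site root."""
    # URL paths are case sensitive, so "/Robots.txt" is a different resource,
    # and a file in a subfolder is never where crawlers look.
    return urlsplit(url).path == "/robots.txt"


for candidate in (
    "https://example.com/robots.txt",
    "https://example.com/Robots.txt",
    "https://example.com/blog/robots.txt",
):
    print(candidate, is_valid_robots_location(candidate))
```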

Step 2: Format Your robots.txt File

To communicate effectively with search engine crawlers, you'll need to make sure your robots.txt file is formatted correctly. Every robots.txt file lists a 'user-agent', followed by 'directives' for that agent to follow.

A user-agent is a specific search engine crawler you want to give instructions to. Some common ones include bingbot, googlebot, slurp (Yahoo), and yandex. Directives are the instructions you want the search engine crawlers to follow. We've already discussed two kinds of directives in this post: disallow and crawl delay.
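To see how a user-agent line scopes its directives, here is a minimal sketch using Python's `urllib.robotparser`. The `/drafts/` path is hypothetical. Because the group names only Googlebot and there's no catch-all group, other crawlers aren't restricted at all.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical group that targets a single crawler by its user-agent name.
RULES = """\
User-agent: Googlebot
Disallow: /drafts/
"""

parser = RobotFileParser()
parser.parse(RULES.splitlines())

url = "https://example.com/drafts/upcoming-post/"
print(parser.can_fetch("Googlebot", url))  # False: the group names this bot
print(parser.can_fetch("Bingbot", url))    # True: no group applies to Bingbot
```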

When you put these two elements together, you get a complete robots.txt file. It can be as short as just two lines. Here's our own robots.txt file as an example:

You can find more examples by simply typing in a website's URL followed by /robots.txt (e.g., example.com/robots.txt).

Another important formatting element is the 'wildcard.' This is a symbol used to represent multiple search engine crawlers at once. In our robots.txt file above, the asterisk (*) stands in for all user-agents, so the directives following it will apply to any bot that reads them.
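Here is a minimal sketch of a two-line file that uses the asterisk, checked against the common crawler names listed earlier. The `/wp-admin/` path is illustrative and not necessarily what our own file contains. Every bot falls under the `*` group, so all of them are blocked from that path.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical two-line file: "*" makes the group apply to every crawler.
RULES = """\
User-agent: *
Disallow: /wp-admin/
"""

parser = RobotFileParser()
parser.parse(RULES.splitlines())

for bot in ("Googlebot", "Bingbot", "Slurp", "YandexBot"):
    blocked = not parser.can_fetch(bot, "https://example.com/wp-admin/")
    print(bot, "blocked" if blocked else "allowed")
```

To inspect a live file the same way, `RobotFileParser` also offers `set_url()` and `read()` in place of `parse()`.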

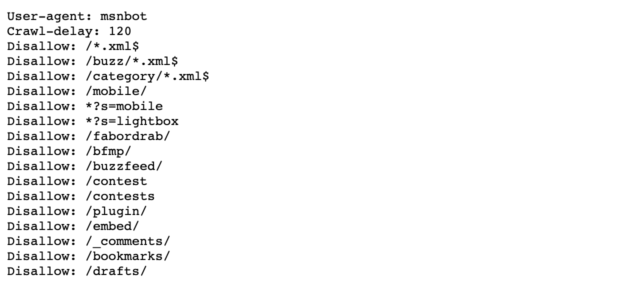

The other commonly used wildcard is the dollar sign ($). It stands for the end of a URL, and is used for giving directives that should apply to all pages with a specific URL ending. Here's BuzzFeed's robots.txt file as an example:

Here, the site is using the $ wildcard to block search engine crawlers from all .xml files. In your own robots.txt file, you can include as many directives, user-agents, and wildcards as you like, in whatever combination best suits your needs.
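Python's `urllib.robotparser` does plain prefix matching and doesn't interpret `*` or `$` inside paths. This minimal sketch therefore hand-rolls a matcher that follows the wildcard semantics of the Robots Exclusion Protocol (RFC 9309): `*` matches any run of characters, and a trailing `$` anchors the end of the URL. The function names are hypothetical, and the `/*.xml$` pattern illustrates the kind of rule described above rather than quoting BuzzFeed's file.

```python
import re


def robots_pattern_to_regex(pattern: str) -> "re.Pattern[str]":
    """Translate a robots.txt path pattern into a compiled regular expression."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape everything literally except '*', which becomes "any characters".
    body = "".join(".*" if ch == "*" else re.escape(ch) for ch in pattern)
    return re.compile(body + ("$" if anchored else ""))


def rule_matches(pattern: str, path: str) -> bool:
    # re.match anchors only at the start, mirroring robots.txt prefix matching.
    return robots_pattern_to_regex(pattern).match(path) is not None


for path in ("/sitemap.xml", "/feeds/latest.xml", "/sitemap.xml?page=2", "/xml-guide/"):
    print(path, rule_matches("/*.xml$", path))
```

The first two paths match. The third doesn't, because `$` requires the URL to end in .xml and this one ends with a query string. Without the `$`, the pattern `/*.xml` would match it.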

Step 3: Use robots.txt Commands to Direct Search Engine Crawlers

Now that you know how to create and format your robots.txt file, you can actually start giving instructions to search engine bots. There are four common directives you can include in your robots.txt file:

- Disallow. Tells search engine crawlers not to crawl the specified page or pages.

- Allow. Permits crawling of specific subfolders or pages inside a section that a Disallow directive blocks. Allow is part of the standardized Robots Exclusion Protocol (RFC 9309), and major crawlers such as Googlebot and Bingbot support it.

- Crawl Delay. Instructs search engine crawlers to wait a specified number of seconds between requests. Not every crawler honors it; Googlebot, for example, ignores this directive.

- Sitemap. Gives search engine crawlers the location of a sitemap that provides additional information, which can help the bots crawl your site more efficiently. Many sites place this directive at the very end of the file, although the sitemaps.org protocol treats it as independent of the user-agent lines, so it can appear anywhere.
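Here is a minimal sketch of a hypothetical file that uses all four directives, read back with Python's `urllib.robotparser`. The paths and sitemap URL are placeholders. Python's parser applies the first rule in a group that matches, whereas Google applies the most specific (longest) match. Placing the Allow line before the broader Disallow line makes both interpretations agree.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical file using all four directives.
RULES = """\
User-agent: *
Allow: /private/annual-report.html
Disallow: /private/
Crawl-delay: 5
Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(RULES.splitlines())

base = "https://example.com"
print(parser.can_fetch("Bingbot", base + "/private/annual-report.html"))  # True (Allow)
print(parser.can_fetch("Bingbot", base + "/private/drafts.html"))         # False (Disallow)
print(parser.crawl_delay("Bingbot"))                                      # 5
print(parser.site_maps())  # ['https://example.com/sitemap.xml']
```

`site_maps()` requires Python 3.8 or later.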

None of these directives are strictly required in your robots.txt file. In fact, you can find arguments for and against using any of them.

At the very least, there's no harm in disallowing bots from crawling pages you absolutely don't want on SERPs, and in pointing out your sitemap. Even if you're going to use other tools to handle some of these tasks, your robots.txt file can provide a backup to make sure the directives are carried out.

Conclusion

There are many reasons you might want to give instructions to search engine crawlers. Whether you need to hide certain areas of your site from SERPs, set up a crawl delay, or point out the location of your sitemap, your robots.txt file can get the job done.

To create and edit your robots.txt file with Yoast SEO, you'll need to:

- Access the Yoast SEO File Editor.

- Format your robots.txt file.

- Use robots.txt commands to direct search engine crawlers.

Do you have any questions about using robots.txt to boost your SEO? Ask away in the comments section below!

Image credit: Pexels.

The post Understanding robots.txt: Why It Matters and How to Use It appeared first on Torque.
